[Xangle Digest]

Translated by Rhea

※ This article contains content originally published by a third party on August 12, 2022. Please refer to the bottom of the article for the copyright notice regarding this content.

Given below is an excerpt from the original report. Please click the link at the end of the article for the full report (currently available in Korean only).

[Table of Contents]

- Web3 and Blockchain Scalability

- Will Layer 2 be the Key to Solving the Scalability Problem?

- Major Layer 2 Projects

- Layer 2 as an Investment Vehicle

- Forecasts and Issues

Layer 2 Ecosystem, a Key to Solving the Blockchain Scalability Problem

Web3 and Blockchain Scalability

Bear markets always come with aches and pains. The prices of Bitcoin and Ethereum have fallen by more than 70% from their peaks, dropping below their January 2018 levels. DeFi protocols’ TVL and the scale of crypto venture capital investment were not immune to the blow either. Despite such turbulent price swings, it remains critical to have a clear understanding of how the value of blockchain networks is changing. We can look to past cases for more insight.

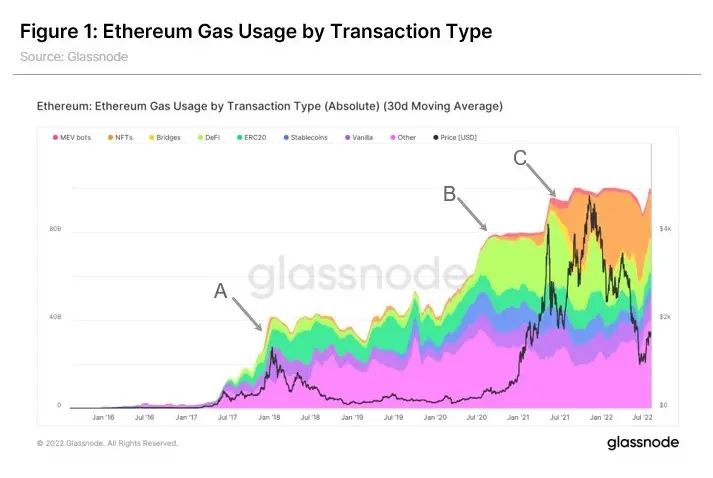

① Importance of Generating New Demands

Historically, the market value of crypto assets has grown along with the development and spread of new use cases. Figure 1 shows the Ethereum price and gas fees by transaction type. The crypto bull market of 2017 and 2018 (Point A on the graph) came with an increase in transactions related to new token (ERC20) ICOs. The upward cycles of 2020 and 2021 show that growth in transactions related to two megatrends, DeFi Summer (Point B) and the NFT Boom (Point C), drove up overall transaction volume. Moreover, crypto asset prices and transaction activity tend to influence each other rather than being two separate events that merely happen one after the other. The emergence and active adoption of new use cases increase network activity and help support the value of crypto assets. This suggests that the next bull market is also highly likely to be accompanied by an active expansion of use cases rather than simply a rise in asset prices.

② Lessons Learnt from the Case of Internet Adoption

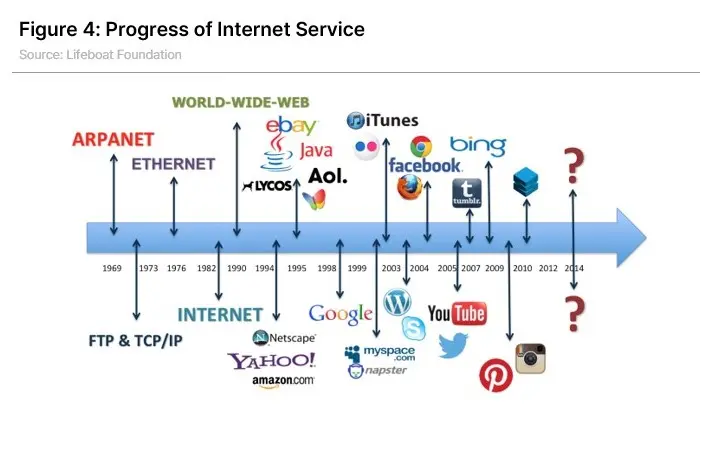

So, where could this “active adoption of use cases” come from? One of the long-term objectives for crypto networks is to become, much like the Internet, a network embedded in everyday life with participation from most of the global population. Despite the great progress already achieved, blockchain technology and Web3 services have not yet reached mass adoption or served a wide range of general users. While 16% of U.S. adults responded that they have invested in or used cryptocurrency at some point, the figure would likely be lower still if the question were about everyday use of crypto apart from trading for investment purposes. GMI, an investment research institute, compared the number of Internet and cryptocurrency users and forecast that the number of people using cryptocurrency will reach 1.2 billion by 2025, assuming it grows at the same rate as Internet users did from 1992, the very early phase of the Internet. GMI’s estimated cryptocurrency user population as of the end of 2021 is about 290 million, which is only 3.8% of the global population and about one-fifteenth of the number of Internet users, who account for 56% of the global population. This means there is still a long way to go if we follow the narrative that “the blockchain is the next internet.”
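The figures quoted above can be cross-checked with a few lines of arithmetic. The following is a minimal sketch, not GMI's methodology: it only uses the numbers in the paragraph, and the four-year horizon (end of 2021 to end of 2025) is an assumption made for illustration.

```python
# Figures quoted in the text (GMI estimates).
crypto_users_2021 = 290e6      # about 290 million users at the end of 2021
crypto_share = 0.038           # 3.8% of the global population
internet_share = 0.56          # 56% of the global population
forecast_users_2025 = 1.2e9    # forecast of 1.2 billion users by 2025

# Assumption (not from the text): the forecast spans four full years.
years = 4

implied_population = crypto_users_2021 / crypto_share
internet_to_crypto = internet_share / crypto_share
implied_annual_growth = (forecast_users_2025 / crypto_users_2021) ** (1 / years) - 1

print(f"implied global population: {implied_population / 1e9:.2f} billion")
print(f"internet users per crypto user: {internet_to_crypto:.1f}")
print(f"implied annual growth rate: {implied_annual_growth:.1%}")
```

The ratio of the two population shares comes out just under 15, which is consistent with the "one-fifteenth" comparison in the text.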

However, one important thing must be noted when embracing such a narrative (even if we put aside the credibility of the user estimates themselves). Just as mass adoption of the Internet did not take place spontaneously, the expansion of Web3 also requires a clear and specific motivation and trigger to reach widespread adoption. In the 1990s and early 2000s, when the Internet adoption rate surged, the world witnessed the birth of many innovative services and companies such as internet browsers (like Netscape and Internet Explorer), search engines (like Yahoo and Google), and e-commerce services (like eBay and Amazon). They played a pivotal role in the growth of the early Internet user population. Since then, the “World Wide Web” has scaled up to embrace various sectors, including social network services, cloud services, video content services, and smart devices, taking a firm hold on its position as the network connecting the entire globe. In other words, to assess whether blockchain networks will be able to replace the conventional Internet or co-exist and grow with it, we need to ask whether blockchain networks provide enough services (such as asset transfer, DeFi, NFT, and P2E games) to lead people to the world of Web3, just as e-mail and search engines once did for the Internet.

Scalability, a Prerequisite for General Adoption

Scott Clark, an IT columnist, presented the following as some of the bumps blocking the general users from adopting and accepting Web3.

- Information such as crypto wallets and addresses is difficult to manage

- Designs are unfamiliar and poorly made

- There are not enough compelling dApps available

- Existing brands are hesitant to switch their operations over to DAO

- Penetration and adoption of VR/AR devices are low

People who have used Web3 services before could easily think of many more areas for improvement, such as transfer speed, transaction fees, and the inconvenience users face in transferring and exchanging assets (especially across chains). In particular, the lack of dApps with adequate utility and the speed and cost of transfers are two issues deeply intertwined with the scalability of blockchain networks. Scalability refers to a network’s capability to handle increases in transaction volume. The lack of such scalability is one of the major bottlenecks on the road to mass adoption.

③ Significance of Blockchain Scalability

Blockchain technology made it possible to create a new form of network that does not have to rely on a single entity to process data and deliver value. However, the distributed validation and recording process, in turn, limits the scalability of blockchain networks compared to conventional centralized networks. In terms of speed, for example, Bitcoin’s blocks are generated every 10 minutes or so, and its throughput is three to seven TPS (transactions per second), while the Ethereum network generates a block of about 80 KB on average every 12-14 seconds and processes about 15 transactions per second. In terms of cost, the average fee per transaction on the Ethereum network is about USD 2-3 as of early August. Although considerably lower than the USD 40 at the beginning of the year, the price still acts as a stumbling block for processing transactions at scale. The volatility of the cost is also an issue. Since transaction fees are determined by the supply of and demand for block space, gas prices for the entire network have spiked whenever there was a surge in demand for network usage, such as the rush caused by CryptoKitties and the NFT boom.

In other words, block space, where data is stored, is a scarce resource much like Internet traffic, so improving blockchain scalability can be understood as expanding the capacity to meet the demand for putting more data into that block space.
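To make the scarcity of block space concrete, the throughput figures above can be converted into daily capacity. This is a rough sketch that assumes a steady transaction rate; the demand figure is hypothetical and chosen only for illustration.

```python
SECONDS_PER_DAY = 24 * 60 * 60

def daily_capacity(tps: float) -> int:
    """Transactions a chain can process per day at a steady rate."""
    return int(tps * SECONDS_PER_DAY)

# Throughput figures quoted in the text.
bitcoin_low, bitcoin_high = daily_capacity(3), daily_capacity(7)
ethereum = daily_capacity(15)

# Hypothetical daily demand (an assumption, not a measured value).
hypothetical_daily_demand = 5_000_000
shortfall = max(0, hypothetical_daily_demand - ethereum)

print(f"Bitcoin: {bitcoin_low:,} to {bitcoin_high:,} transactions per day")
print(f"Ethereum: {ethereum:,} transactions per day")
print(f"Unserved transactions at the hypothetical demand: {shortfall:,}")
```

When demand exceeds this fixed capacity, users bid against each other for inclusion, which is the mechanism behind the fee spikes described above.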

Will Layer 2 be the Key to Solving the Scalability Problem?

① Networks’ Attempts to Scale Up and the Constraints They Face

Making a faster and cheaper blockchain network is no easy task. If it were any regular database, its processing speed could be directly increased simply by adding more hardware, for example. However, since blockchain networks, by nature, need to be able to operate without the need to trust or rely on a specific entity, they come with a special set of limitations dubbed the “Blockchain Trilemma.”

The blockchain trilemma refers to the tradeoff relationship of the three components of blockchains: decentralization, security, and scalability. The generation of a block is confirmed upon the consensus of multiple distributed nodes. Assuming all the other conditions are the same, the more nodes there are, the more decentralized the network. However, that will cause redundancy in calculations and increase the time required for the nodes to reach a consensus (as shown in Figure 12). If the network tries to scale up by reducing the block generation time and increasing block capacity, it will face yet another problem: tighter constraints for the full node operation.

Under the umbrella of this tradeoff relationship, each blockchain network chooses its level of scalability that suits its purpose and target. This is similar to the “Constrained Optimization Problem” in economics. For example, to allow only those operators with high specifications to participate as validators, Solana has imposed the minimum requirements of having at least 128GB RAM and 2.8GHz CPU, enabling more transactions to be processed per unit time at the expense of a certain degree of decentralization.

However, the specific constraints of the trilemma are not set in stone. Our earlier discussion of the tradeoff was based on the assumption that “all the other conditions are the same.” With technological advancements, such as further development in communication technologies, optimization of the way consensus is reached, and changes in network design such as sharding, the degree of scalability may improve gradually at the same level of security and decentralization. Even if the tradeoff relationship behind the trilemma remains, the restrictions it poses can be mitigated little by little, eventually reaching an equilibrium that is much improved in a practical sense. As Michael Zochowski pointed out in his Medium post, the more pertinent question to ask here may be not “How does this network solve the scalability trilemma?” but “How does this network scale practically?”

② Modular Blockchains

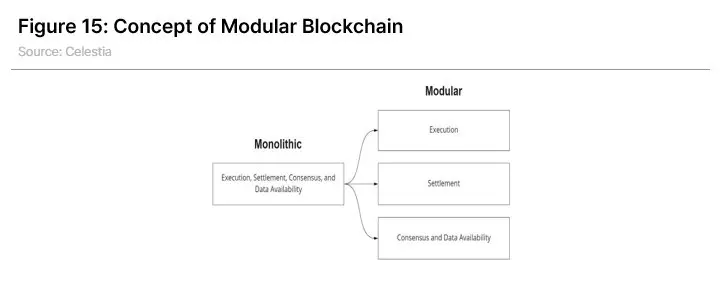

The attempts at scalability improvement mentioned earlier focused on improving the processing capabilities within a single blockchain. Others have sought to resolve the trilemma with a different approach, dividing up different functions of a single blockchain into various other chains.

In a nutshell, blockchains process their data in the following steps. First, a consensus mechanism among nodes, such as PoS (Proof-of-Stake) or PoW (Proof-of-Work), is used to check that the state of the blockchain is valid and to determine the order in which transactions are processed. Then, the transactions are executed, updating the chain’s state data. Finally, the transaction data needs to be distributed throughout the network so that other nodes can check it at any time, which is referred to as “data availability.” These three elements (execution, consensus, and data availability) are widely accepted as the key functions of a blockchain.

In general, a blockchain would process all these three functions on a single chain, in an approach referred to as a “monolithic blockchain.” Bitcoin, Ethereum, Solana, and many more mainnet chains fall into this category. On the other hand, a “modular blockchain” would farm out the functions of execution, consensus, and data availability to be processed on different chains in part or its entirety, allowing for better processing speed and efficiency.

③ Layer 2 Solutions

Moving transaction execution to a separate chain is the most basic idea for improving the transaction processing capability of modular chains. A blockchain can parcel out one of its three key functions, transaction execution, to be processed outside of its main chain, while the remaining two functions, consensus and data availability, are handled on the main chain. Such a method is called a Layer 2 (L2) solution because the functions are divided between two layers and handled separately. The base chain, or main chain, is called Layer 1 (L1), and the separate, additional chain executing the transactions is called Layer 2.

Let us take Ethereum as an example for a closer look. The conventional, monolithic approach would involve processing all the key functions on the Ethereum network itself. With Layer 2, a separate chain handles transaction execution, and Ethereum is freed up to process only the consensus and the posting of transaction results. This greatly reduces the heavy load of calculations that had to be processed on Ethereum while still retaining the security guarantees of the main chain, since consensus and record keeping are carried out on Ethereum as before. This also differs from another modular method, the sidechain. The sidechain approach is similar to Layer 2 in that it also executes transactions on a separate chain. They differ in that consensus on a sidechain’s transaction results is reached not on the main chain but through the sidechain’s own mechanism. This allows for faster processing, but with the downside of potentially weaker security than the main chain.
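The division of labor described above can be summarized as a small data structure. This is an illustrative sketch only: the class and field names are hypothetical, and placing a sidechain's data availability on the sidechain itself is a simplifying assumption, since the text only states where its consensus takes place.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ChainDesign:
    name: str
    execution: str
    consensus: str
    data_availability: str

    def inherits_l1_security(self) -> bool:
        # In the article's framing, a design keeps the main chain's security
        # guarantees when consensus and record keeping both stay on L1.
        return self.consensus == "L1" and self.data_availability == "L1"

DESIGNS = [
    ChainDesign("monolithic", "L1", "L1", "L1"),
    ChainDesign("layer 2", "L2", "L1", "L1"),
    # Assumption: the sidechain also stores its own data.
    ChainDesign("sidechain", "sidechain", "sidechain", "sidechain"),
]

for design in DESIGNS:
    print(f"{design.name}: executes on {design.execution}, "
          f"inherits L1 security = {design.inherits_l1_security()}")
```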

Layer 2 solutions can be further sub-categorized by their execution format into rollups, plasmas, and validiums. The discussions in this report will mainly focus on the rollups as they are the most widely used.

④ Rollups

Rollups are one of the most representative Layer 2 solutions. Again, let us take Ethereum as an example. Rollups execute transactions on a separate layer (L2) rather than on Ethereum (L1), submitting to Ethereum only the aggregates (the “roll-ups”) of the transaction data and the block state values changed by those transactions, in a compressed batch form. This way, far more transactions fit in the same block space than if they were executed directly on Ethereum.

During this process, the sequencer is tasked with batching transaction data on the rollup chain and posting the results on L1. However, the transaction and block state data posted on the L1 chain by the sequencer must be verified for validity and accuracy. The two major methods used for such verification are the fraud proof, which assumes all data to be valid unless it is disputed and checked after the fact, and the validity proof, which validates each data submission in advance. The optimistic rollup and the ZK rollup, the two most representative rollup approaches, use the former and the latter, respectively.
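The sequencer's job can be sketched in a few lines. This is a toy model under stated assumptions, not any project's implementation: the "state root" is a plain SHA-256 hash of the balances rather than a Merkle root, the compression is generic zlib rather than a rollup-specific encoding, and all function names are hypothetical.

```python
import hashlib
import json
import zlib

def apply_transfer(balances, tx):
    """Execute one transfer on L2 and return the updated balances."""
    sender, recipient, amount = tx["from"], tx["to"], tx["amount"]
    if balances.get(sender, 0) < amount:
        raise ValueError("insufficient balance")
    updated = dict(balances)
    updated[sender] = updated.get(sender, 0) - amount
    updated[recipient] = updated.get(recipient, 0) + amount
    return updated

def state_root(balances):
    # Stand-in for a Merkle root: hash of a canonical JSON encoding.
    encoded = json.dumps(balances, sort_keys=True).encode()
    return hashlib.sha256(encoded).hexdigest()

def build_batch(balances, txs):
    """Execute txs off-chain and return (batch to post on L1, new balances)."""
    pre_root = state_root(balances)
    for tx in txs:
        balances = apply_transfer(balances, tx)
    batch = {
        "pre_state_root": pre_root,
        "post_state_root": state_root(balances),
        "compressed_txs": zlib.compress(json.dumps(txs, sort_keys=True).encode()),
    }
    return batch, balances

balances = {"alice": 100, "bob": 0}
txs = [
    {"from": "alice", "to": "bob", "amount": 30},
    {"from": "bob", "to": "alice", "amount": 10},
]
batch, new_balances = build_batch(balances, txs)
print(new_balances)
print(len(batch["compressed_txs"]), "bytes of transaction data posted to L1")
```

Only the batch dictionary would go to L1 in this model; whether its contents are correct is exactly what fraud proofs and validity proofs are meant to establish.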

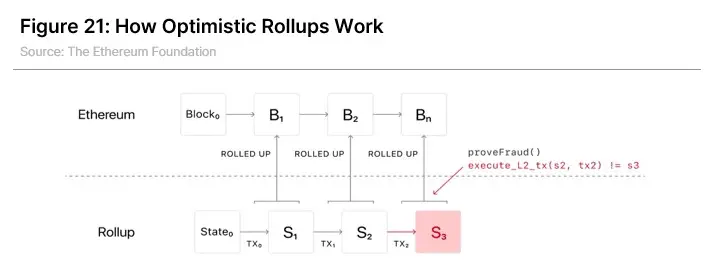

Optimistic Rollups

In optimistic rollups, sequencers freely submit batches containing state data to the Ethereum network. However, verifiers can challenge a sequencer for making a fraudulent posting, whether deliberate or negligent, before the posting is finalized on the Ethereum chain. If the challenged posting is found to be fraudulent, the batch is deleted and nullified, and the sequencer who posted it is penalized. To allow for this process, a transaction in optimistic rollups is normally followed by a 7 to 14-day challenge (or dispute) period, after which the posting is finalized and confirmed. This means it takes an additional 7 to 14 days to withdraw assets back to the L1 network after moving them onto L2 in optimistic rollups.
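The challenge period can be modeled as a small state machine. The sketch below is a simplification under assumptions: "re-execution" is reduced to summing a list, time is counted in whole days, the 7-day window is the lower bound cited above, and the names are hypothetical.

```python
from dataclasses import dataclass

CHALLENGE_PERIOD_DAYS = 7  # the text cites 7 to 14 days

@dataclass
class PostedBatch:
    claimed_result: int
    inputs: list
    posted_on_day: int
    status: str = "pending"

def execute(inputs):
    # Stand-in for re-executing the batch's transactions.
    return sum(inputs)

def challenge(batch, today):
    """A verifier re-executes the batch. Returns True if fraud is proven."""
    window_open = today < batch.posted_on_day + CHALLENGE_PERIOD_DAYS
    if batch.status != "pending" or not window_open:
        return False  # already settled, or the window has closed
    if execute(batch.inputs) != batch.claimed_result:
        batch.status = "reverted"  # batch nullified; the sequencer would be penalized
        return True
    return False

def finalize(batch, today):
    """Finalize a batch that survived the challenge period unchallenged."""
    if batch.status == "pending" and today >= batch.posted_on_day + CHALLENGE_PERIOD_DAYS:
        batch.status = "finalized"
    return batch.status

honest = PostedBatch(claimed_result=6, inputs=[1, 2, 3], posted_on_day=0)
fraudulent = PostedBatch(claimed_result=99, inputs=[1, 2, 3], posted_on_day=0)

print(challenge(honest, today=3), challenge(fraudulent, today=3))
print(finalize(honest, today=6), finalize(honest, today=7), finalize(fraudulent, today=7))
```

The honest batch cannot be finalized on day 6, which is why withdrawals to L1 have to wait out the full window.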

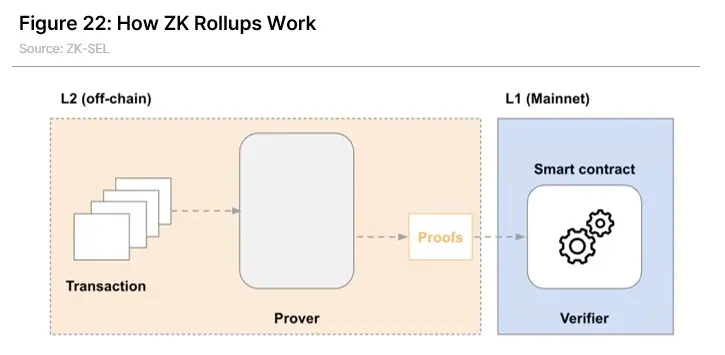

ZK (Zero-Knowledge) Rollups

ZK rollups use the zero-knowledge proof (ZKP) technology, which mathematically proves that a party knows, or has the knowledge of, an answer to a certain problem without revealing that answer itself. This encryption technology is used in a wide range of different fields, such as security and identification.
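To give a feel for proving knowledge of a secret without revealing it, here is a toy version of the Schnorr identification protocol, a classic textbook proof of knowledge. It is not the proof system ZK rollups use (those rely on ZK-SNARKs or ZK-STARKs), and the group parameters are deliberately tiny and insecure; they are chosen only so the arithmetic is easy to follow.

```python
import secrets

# Toy group parameters, far too small for real use.
P = 23  # prime modulus
Q = 11  # prime order of the subgroup generated by G
G = 2   # generator of that subgroup (2**11 % 23 == 1)

def public_key(secret):
    return pow(G, secret, P)

def commit(nonce):
    """Prover's first message: a commitment to a random nonce."""
    return pow(G, nonce, P)

def respond(secret, nonce, challenge):
    """Prover's answer; the random nonce masks the secret."""
    return (nonce + challenge * secret) % Q

def verify(pub, commitment, challenge, response):
    """Verifier checks G^response == commitment * pub^challenge (mod P)."""
    return pow(G, response, P) == (commitment * pow(pub, challenge, P)) % P

secret = 7                               # known only to the prover
pub = public_key(secret)                 # known to everyone
nonce = secrets.randbelow(Q)             # prover's fresh randomness
commitment = commit(nonce)
challenge_value = secrets.randbelow(Q)   # verifier's random challenge
response = respond(secret, nonce, challenge_value)
print(verify(pub, commitment, challenge_value, response))
```

An honest prover always passes the check, while the verifier only ever sees the commitment and the response, never the secret itself.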

ZK rollups use the validity proof, which verifies the validity of the batch data submitted to L1 immediately upon submission. This process also requires the sequencer (“Prover” in Figure 22) to submit the transaction and state data, compressed into batches, to L1. However, ZK rollups include an additional piece of information: a key generated using zero-knowledge technology to prove that the transactions were carried out validly in accordance with the rules. The batch submitted by the sequencer is checked by the smart contract handling the verification (“Verifier” in Figure 22) before being posted and confirmed on the Ethereum network. This means that, unlike optimistic rollups, ZK rollups do not require a third-party validator and allow immediate asset withdrawals without any dispute period.

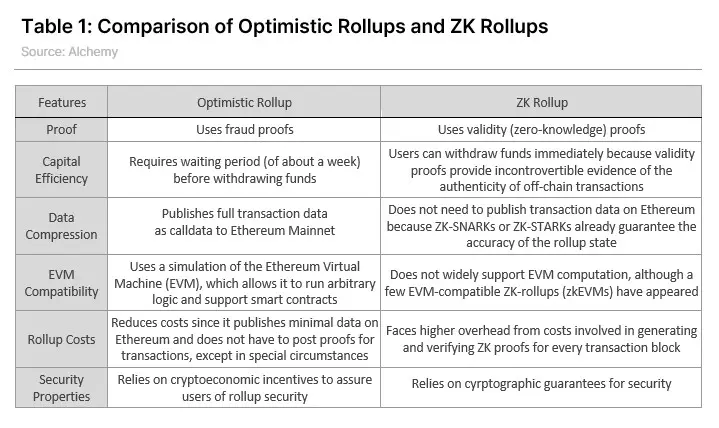

Optimistic Rollups vs. ZK Rollups

From the users’ standpoint, the most apparent distinction between these two types of rollups is whether they enforce a dispute period. When withdrawing assets moved from L1 to L2 via a bridge, Arbitrum and Optimism, the optimistic rollups, require users to wait out their 7-day challenge period. ZK rollups, on the other hand, do not require any third party to verify the validity of their transactions and allow asset withdrawals in a matter of minutes. Even on an optimistic rollup, users can take advantage of services like Hop Protocol to obtain bridge tokens for a small service fee, or use the rollup protocol’s own liquidity pool, and enjoy quick withdrawals as if there were no waiting period. However, since liquidity providers fronting their own tokens cannot substitute for unique, non-fungible assets like NFTs, immediate withdrawals in optimistic rollups are still very much limited.
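The liquidity-based shortcut can be illustrated with a generic model. This sketch does not describe Hop Protocol or any specific bridge: the function, the fee of 30 basis points, and the pool size are all hypothetical, and amounts are plain integers in the token's smallest unit.

```python
def fast_withdrawal(amount: int, fungible: bool, fee_bps: int, pool_liquidity: int):
    """Front a withdrawal from an L1 liquidity pool.

    Returns (amount paid to the user now, liquidity left in the pool).
    The provider is assumed to be repaid once the challenge period ends.
    """
    if not fungible:
        # A pool holds interchangeable tokens, so it cannot hand over
        # the one specific asset the user owns.
        raise ValueError("a unique asset such as an NFT cannot be fronted from a pool")
    fee = amount * fee_bps // 10_000
    payout = amount - fee
    if payout > pool_liquidity:
        raise ValueError("not enough liquidity to front this withdrawal")
    return payout, pool_liquidity - payout

payout, remaining = fast_withdrawal(1_000_000, fungible=True, fee_bps=30, pool_liquidity=5_000_000)
print(payout, remaining)
```

The user trades a small fee for immediacy, while the liquidity provider bears the wait.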

If you are seeking to deploy Ethereum smart contracts on a rollup chain, an optimistic rollup would be the natural choice. ZK rollups require a zero-knowledge proof that expresses transaction rules in terms of mathematical functions and then encrypts them every time a batch is created on the rollup chain. However, turning non-standardized, general-purpose smart contracts into a function format that a ZK proof can process is technically very difficult. The fact that the EVM (Ethereum Virtual Machine) was not originally designed with ZK technology in mind is another roadblock for compatibility. With optimistic rollups, on the other hand, it is easy to run the EVM and to write and execute Ethereum smart contracts on the rollup chain. Arbitrum and Optimism provide EVM-compatible general-purpose solutions that can run various Ethereum dApps.

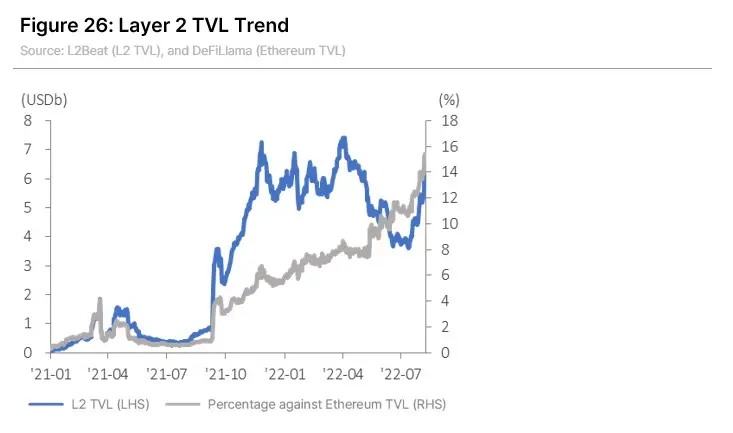

At the moment, optimistic rollups are leading the market with their offer of general-purpose solutions. Arbitrum and Optimism have claimed the top two positions in terms of Layer 2 protocol TVL rankings, with optimistic rollups taking up 80% of the market's total TVL as of early August.

ZK Rollups Supporting EVM

ZK rollup projects are actively developing EVM compatibility solutions to overcome the limitations mentioned above. With its version 2.0, zkSync plans to start offering a general-purpose solution that supports EVM compatibility. Last July, Polygon also revealed its EVM-compatible L2 solution, Polygon zkEVM. It is targeting a mainnet launch in early 2023, after which users will be able to move their Ethereum smart contracts to Polygon zkEVM easily. Scroll also launched its pre-alpha testnet in July; it is being developed to run Ethereum smart contracts as-is, without conversion at the bytecode level, and to generate ZK proofs for them. As many projects rush to launch EVM-compatible ZK rollups, this is expected to build momentum for growing the rollup user base and to fire up the competition for market share among protocols.

On the other hand, some predict that things may take a different turn if protocols were to cherry-pick only the advantages of each proof method as they evolve. For example, Optimism has stated that while it is working with the same premise that the ZK technology still has many areas of improvement in terms of EVM compatibility, its multi-client ecosystem ensures that the EVM equivalence can be supported on the Optimistic protocol by adding ZK technology and validity proof as clients.

How Much Scalability Improvement Can We Achieve with Rollups?

Rollups are able to increase scalability because, by batching a large number of transactions, they reduce the number of transactions and the amount of data to be processed on the main network. On a rollup chain, an ETH transfer requires only 12 bytes or less of data to go on L1, which is close to a 10-fold saving compared with executing it directly on Ethereum. Another advantage is that rollup chains, which only handle code execution, are relatively free from the constraints on speed and block size that a main chain such as Ethereum imposes for the sake of decentralization and security.

In terms of cost, when sending ETH as of August 7, 2022, Optimism and Arbitrum offer about a 10-fold reduction compared to Ethereum, and zkSync, based on ZK rollup, offers about a 50-fold reduction, according to the average gas prices compiled by L2Fees. These figures change daily based on various factors, such as the level of network activity. Forecasts show that each rollup solution can theoretically reduce transaction fees by nearly 100-fold compared to Ethereum if its activity level remains high and transactions are compressed efficiently.

As for speed, Vitalik Buterin shared his view that the Ethereum network’s processing speed of about 15 TPS can be improved to as much as 4,807 TPS via rollups, according to his calculations based on the batches’ data capacity and Ethereum’s gas limit. According to EthHub's estimation, optimistic rollup solutions process approximately 200-2,000 TPS, while zkSync estimates its own speed at over 2,000 TPS. After Ethereum’s sharding, discussed later, the rollup chains’ scalability may improve further.
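A calculation of this kind can be sketched as follows. The article does not give the inputs, so the gas limit, the proof verification overhead, and the block time below are assumptions; they are chosen because, together with the 12 bytes per transfer cited earlier and the 16 gas per non-zero calldata byte set by EIP-2028, they reproduce the quoted figure.

```python
GAS_LIMIT_PER_BLOCK = 12_500_000  # assumption: not stated in the article
PROOF_OVERHEAD_GAS = 500_000      # assumption: gas reserved for verifying a batch proof
GAS_PER_DATA_BYTE = 16            # calldata cost per non-zero byte (EIP-2028)
BYTES_PER_TRANSFER = 12           # from the text: an ETH transfer on a rollup
BLOCK_TIME_SECONDS = 13           # assumption: midpoint of the 12-14 seconds cited earlier

# Bytes of rollup data that fit into one L1 block.
data_bytes_per_block = (GAS_LIMIT_PER_BLOCK - PROOF_OVERHEAD_GAS) // GAS_PER_DATA_BYTE

# Transfers that fit into that data, and the resulting throughput.
transfers_per_block = data_bytes_per_block // BYTES_PER_TRANSFER
rollup_tps = transfers_per_block / BLOCK_TIME_SECONDS

print(f"{data_bytes_per_block:,} bytes per block")
print(f"{transfers_per_block:,} transfers per block")
print(f"about {int(rollup_tps):,} TPS")
```

The result is an upper bound for simple transfers under these assumptions; more complex transactions need more bytes and lower the figure accordingly.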

Sequencer Centralization Issue

The sequencers, or operators, serve the role of executing transactions on the rollup chain and sending the state data for the said transaction to the main chain. However, the rollup solutions’ sequencers today are relatively centralized compared to the decentralized main chains like Ethereum. Many rollup solutions, such as Optimism, Arbitrum, and zkSync, rely on a sole sequencer specified or operated by the project for the convenience of development and operation, which has been pointed out as one of the challenges that the rollup method needs to overcome.

Does having centralized sequencers mean that the security of these rollup chains cannot be trusted? Not necessarily. Optimistic rollups use fraud proofs and ZK rollups use validity (ZK) proofs to verify the sequencers’ behavior and filter out any malicious acts. Even if the sequencers are centralized, additional validation mechanisms exist to maintain their trustworthiness. Buterin argued that, provided a few preconditions are in place along with such additional validation, censorship resistance can be secured as long as block validation is highly decentralized and trustless, even if block generation is centralized. Moreover, many rollup protocols offer "escape hatches" that allow assets and smart contracts to be withdrawn to Ethereum L1 without going through the sequencers in case of abnormal or suspicious transaction activity on the rollup chain. With this feature, the risk of asset exploits on rollup chains is lower than on sidechains or bridges between L1 chains.

Even so, there are clear reasons why sequencer centralization continues to be criticized as a pain point that must ultimately be dealt with. Centralized sequencers may be vulnerable to issues such as network downtime, and they open the door for malicious actors to post transactions selectively or rearrange the order of execution. That is why L2 projects aim to decentralize their sequencers eventually, each devising its own countermeasures against malicious acts. Optimism plans to decentralize sequencers by 2023, and StarkNet has also stated that its goal is to ultimately decentralize operators using its token as the medium.

Major Layer 2 Projects

① Optimistic Rollup Projects

Optimism and Arbitrum are the two most representative Ethereum-based Layer 2 protocols using optimistic rollup. According to L2Beat, these two protocols account for over 80% of the total TVL of Layer 2 protocols. Both are optimistic rollup-based general-purpose protocols, enabling anyone to deposit ETH into the protocol through a bridge and to use dApps with improved scalability. As they provide higher compatibility with the EVM, it is also easier for developers to launch and operate dApps. In particular, Optimism offers a development environment that is the same as the EVM even at the bytecode level (i.e., EVM equivalent). One small difference is how they handle validation: Optimism validates all the transactions in a batch where a challenge has been raised, while Arbitrum validates only the specific transactions in question.
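One way to narrow a dispute down to a single step, instead of re-checking a whole batch, is to bisect over the intermediate states. The sketch below is a simplified illustration of that idea and not a description of either protocol's implementation: states are plain integers, both traces are assumed to be fully known to one function, and the names are hypothetical.

```python
def disputed_step(claimed, recomputed):
    """Binary-search for a step where two state traces part ways.

    Both traces list the state after each step, starting from a shared
    initial state at index 0. Returns an index i such that the traces
    agree at i - 1 and disagree at i, or None if the final states match.
    """
    if len(claimed) != len(recomputed) or claimed[0] != recomputed[0]:
        raise ValueError("traces must share a length and a starting state")
    if claimed[-1] == recomputed[-1]:
        return None  # nothing to dispute
    low, high = 0, len(claimed) - 1  # invariant: agree at low, disagree at high
    while high - low > 1:
        mid = (low + high) // 2
        if claimed[mid] == recomputed[mid]:
            low = mid
        else:
            high = mid
    return high

claimed_states = [0, 5, 9, 14, 20]     # posted by the sequencer
recomputed_states = [0, 5, 9, 12, 18]  # obtained by a verifier
print(disputed_step(claimed_states, recomputed_states))
```

Only the single step returned by the search then needs to be re-executed to settle the dispute, and the number of comparisons grows with the logarithm of the trace length.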

Boba Network, another general-purpose L2 solution based on optimistic rollup, stepped into the limelight soon after its launch with competitively high returns on DEX deposits. While it takes seven days for users of Optimism and Arbitrum to withdraw assets from L2 back to Ethereum, Boba Network made it possible to withdraw assets without waiting out any such period by leveraging a fund raised by liquidity providers. Metis uses its own method, Smart L2, which differs slightly from the other optimistic rollups. The goal is to enable DACs (Decentralized Autonomous Companies) to be easily formed and operated on the Metis Network. Its mainnet, Andromeda, launched in November 2021 and now has about 500 DACs.

② ZK Rollup Projects

zkSync

zkSync is an Ethereum-based ZK rollup solution developed by Matter Labs. zkSync 1.0 provided application-specific functions such as token swap, and zkSync 2.0, a general-purpose testnet, is currently in operation with a plan to launch its mainnet within 4Q this year. Version 2.0 supports EVM compatibility and makes it easy to deploy smart contracts written in Solidity. Users are able to enjoy almost the same dApp experience as they would on Ethereum.

zkSync 2.0 also comes with an added function called zkPorter, which provides extra flexibility for data availability. For the part of the state data generated on L2 that requires stronger security, users can continue to post it on Ethereum L1 via ZK rollup for data availability. Alternatively, if they opt for a moderate level of security at an affordable fee, they can entrust data availability to zkPorter accounts, which are independent from Ethereum. The data availability of zkPorter accounts is secured by having decentralized nodes called “Guardians” participate in the validation process. The project claims that fees for zkPorter accounts will be close to 100 times lower again than those of zkRollups, as the load processed on the Ethereum chain is reduced.

Going forward, zkSync plans to issue its native tokens. The token’s utility is expected to be for the operation of zkPorter, with nodes staking the token via PoS to participate in the validation process as Guardians and getting slashed if they have not carried out their role as validators.

StarkNet

StarkNet is a general-purpose ZK rollup solution developed by StarkWare that uses the ZK-STARK approach rather than ZK-SNARK. StarkWare had already launched the StarkEx service using ZK-STARK in 2020. StarkEx is a scalability engine that generates ZK proofs for transaction execution specific to each application. It is mostly used by DEXs and gaming-related projects such as dYdX, Sorare, and Immutable X. StarkNet, launched in 2022, is a general-purpose solution that allows projects to deploy and operate general-purpose smart contracts. Smart contracts on StarkNet are written in Cairo, StarkNet’s own language specialized for implementing ZK-STARK. Although StarkNet does not yet support EVM compatibility, Warp, a service that transpiles Solidity code into Cairo, is currently in development. Last July, StarkNet announced its plans to release its native token to promote more activity in the developer ecosystem and advance decentralization.

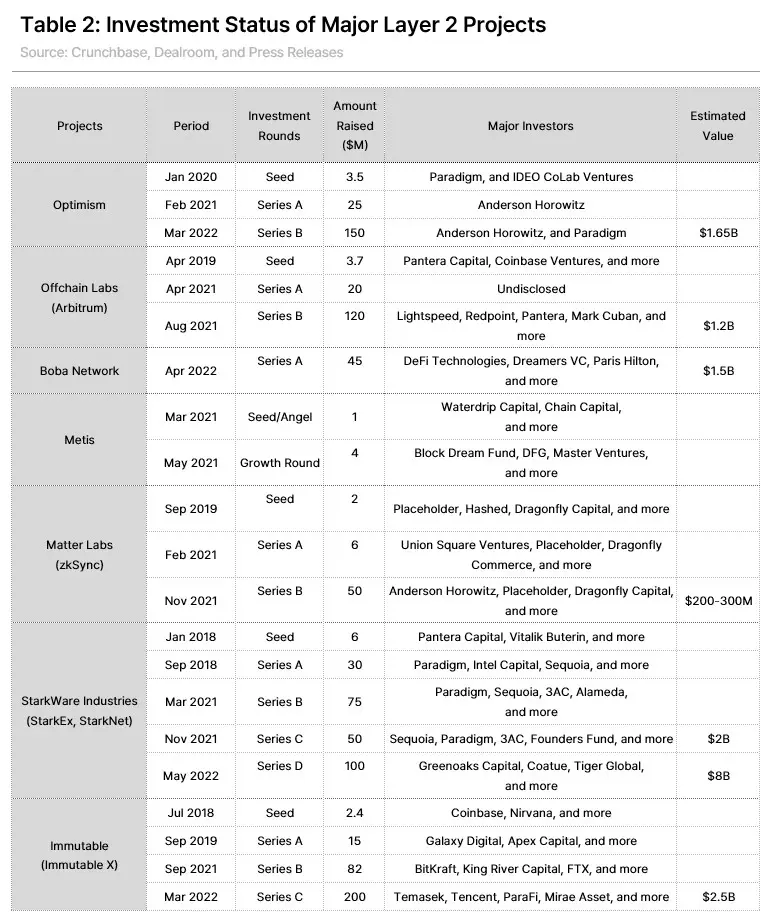

So far, StarkWare Industries, the developer, has raised over USD 260 million from various investors, including Paradigm and Sequoia, and was valued at USD 8 billion in its Series D funding round.

③ Polygon and Its SaaS (Scaling-as-a-Service) Strategy

With so many formidable contenders out there, what counts as the best Layer 2 solution will surely differ by the characteristics and requirements of each project. For example, while protocols providing DeFi secured loans may value security over speed, services for real-time games may have greater demand for high speed and low fees than for security. Polygon Network offers various scalability improvement solutions users and projects can choose from along with its sidechain, Polygon PoS. "ZK Verse" is one of those solutions and includes various Layer 2 solutions using ZK rollups as below:

- Polygon Zero: A solution focused on fast processing speed, using both ZK rollup and validium. Its strength lies in being able to minimize the time and capacity needed to generate ZK proofs.

- Polygon Hermez: A solution with notably strong decentralization. Using its native token, it uses not a single sequencer but a group of coordinators serving the role of the sequencer. Ethereum-compatible zkEVM is to be launched.

- Polygon Miden: A general-purpose ZK rollup network using ZK-STARK. It plans to provide support for its native virtual machine, Miden VM, that automatically generates ZK proof upon executing smart contracts.

- Polygon Nightfall: A solution fit for corporates that emphasize the protection of privacy. Strengthening privacy with ZK technology on top of optimistic rollup, this hybrid approach is co-developed with EY and allows for KYC compliance and privacy protection via ZK cryptography, even on the Ethereum network. Mainnet beta is currently running, with the mainnet launch targeted for the latter half of this year.

As presented, Polygon enables users to run various types of modular blockchains on top of Ethereum as the settlement layer to help further improve scalability. Its strategy is to allow a wide variety of users to pick and choose the solutions that best fit their needs. As also can be observed in the strategies of other major Layer 2 solutions discussed earlier, scalability improvement solutions tend to favor taking full advantage of the modular scheme and flexibly deploying different functions of blockchain to on- and off-chain, breaking free from the limits of specific methods. Through such an approach, developers and users are allowed to select and combine the solutions they need in accordance with the scalability and security for their own usage and purpose.

Layer 2 as an Investment Vehicle

Layer 2 projects look promising as the next investment vehicle for the following reasons.

- As the number of people using blockchain networks increases, the demand for scalability improvement will also see structural growth.

- Technological advancement is progressing in a more user-friendly direction, such as ZK rollups with EVM support.

- The Ethereum Merge, expected to take place this September, and the subsequent changes will add momentum to the growing demand for Ethereum’s Layer 2 protocols.

- More and more Layer 2 protocols are following suit and starting to issue tokens, adding to the number of investment alternatives available.

As the crypto market shows signs of recovery in the second half of the year, Ethereum, with the Merge approaching, and Layer 2-related assets (especially those tied to general-purpose protocols) are outperforming the broader market.

① Layer 2 Projects Issuing Tokens

Until very recently, investors who wanted exposure to Layer 2 projects and their growth had only limited choices, because the major Layer 2 protocols had not issued tokens. Some tokens had been issued by application-specific services and a few general-purpose services, such as Loopring (LRC), Immutable X (IMX), and Boba Network (BOBA). However, major general-purpose solutions like Optimism, Arbitrum, and StarkNet had no concrete plans for issuing tokens, even as recently as early this year.

However, as major general-purpose projects have recently shifted their strategic direction, interest in the Layer 2 sector as a whole has also grown. In June, Optimism issued its native token, OP, airdropping 5% of the supply to its ecosystem participants. zkSync plans to issue its own token for zkPorter operation after the launch of its version 2.0, and StarkNet revealed plans for its native token in July. While Arbitrum has not yet officially announced a token, it held an NFT airdrop event named the “Arbitrum Odyssey” (temporarily suspended due to technical issues) for its ecosystem participants, fueling anticipation for a native token. All in all, investors seeking exposure to the growth of Layer 2 solutions now have a broader range of alternatives.

② The Need for and Value of Layer 2 Tokens

The fact that major L2 projects had not issued tokens until now suggests that token issuance has not been essential to how the protocols work. In particular, most projects have had no need to provide token incentives because they rely on centralized sequencers. Optimism even denied any possibility of issuing a native token in an interview early this year. Against this backdrop, investors considering Layer 2 tokens should weigh the functions and value of each native token, as with an investment in any other asset.

Layer 2 projects may issue tokens for the following reasons.

- Marketing expenses for attracting users and bootstrapping

- A solution for the sequencer (or operator) centralization issue

- Incentives for ecosystem participants

For further discussion, let us apply these to the cases of Optimism and StarkNet, which have recently issued or announced their native tokens.

Decentralization and Role of Tokens

Optimism’s Governance Experiment

Optimism’s TVL has grown more than threefold since it issued its token, OP, which demonstrates that token issuance can be an effective marketing tool. However, OP tokens are used only for governance participation, and fees on Optimism are still paid in ETH. From an investor’s point of view, the link between the protocol’s growth and the token’s value may therefore be harder to establish than for tokens with other utilities or tokens that entitle holders to a share of fees proportional to transaction volume.

For now, OP tokens matter mainly as a claim on decision-making power within Optimism’s new governance model (much like Uniswap’s UNI token today). The value of the community as a whole can be expected to rise if and when the protocol’s growth is accompanied by the successful establishment of its governance model.

Optimism’s governance has a unique two-house structure. The “Optimism Collective,” Optimism’s governance body, comprises two houses: the Token House and the Citizens’ House. Token House members decide on ordinary protocol operations by casting votes weighted by the number of OP tokens they hold. The soon-to-be-formed Citizens’ House, on the other hand, will make decisions that serve the public good of the whole community, such as distributing funding for public goods. Citizenship will be granted to community contributors in the form of non-transferable soulbound tokens, with each account receiving one vote.
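The difference between the two voting rules can be sketched in a few lines of Python. This is a minimal illustration only: the account names, balances, and function names are hypothetical, and it does not reflect Optimism's actual contracts or voting procedures.

```python
from collections import Counter

def token_house_tally(votes, balances):
    """Token-weighted tally: each vote counts in proportion to the voter's OP balance."""
    tally = Counter()
    for account, choice in votes.items():
        tally[choice] += balances.get(account, 0)
    return dict(tally)

def citizens_house_tally(votes, citizens):
    """One-account-one-vote tally: only accounts holding citizenship count, once each."""
    tally = Counter()
    for account, choice in votes.items():
        if account in citizens:
            tally[choice] += 1
    return dict(tally)

# Hypothetical accounts, balances, and votes (illustration only).
balances = {"alice": 1_000_000, "bob": 500, "carol": 300}
citizens = {"alice", "bob", "carol"}
votes = {"alice": "yes", "bob": "no", "carol": "no"}

token_result = token_house_tally(votes, balances)
citizen_result = citizens_house_tally(votes, citizens)
print(token_result, citizen_result)
```

With these made-up inputs, the single large holder decides the token-weighted vote, while the same ballots produce the opposite outcome under one vote per account.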

StarkNet and Its Developer-Centric Strategy

StarkNet has stated that its vision is to ultimately become a truly public infrastructure like the Internet, naming decentralization and a developer-friendly ecosystem as the immediate challenges on the way to that goal. Accordingly, its recently announced token is designed to encourage the activities of network users, operators, and developers, in addition to enabling governance participation.

Once StarkNet issues its token, the network will accept that token, not ETH, for fees, which gives the token utility for network users. The network aims to decentralize its operation by 2023 through various means, such as having operators stake assets and providing token incentives for their activities.

The network will offer a much stronger incentive for developers. Developers will automatically be allocated a portion of the fees generated by the smart contracts they have created on StarkNet, with additional allocations going to the core developers. Developers will earn more as their contributions grow the ecosystem’s economy and transaction volume. This can be understood as StarkNet’s strategy to bring as many developers as possible into its ecosystem despite the high entry barrier: building on its ZK-STARK-based network requires learning Cairo, StarkNet’s own programming language.

Forecasts and Issues

① Ethereum Upgrade and Rollup-Centric Blueprint

Most Layer 2 protocols today are based on the Ethereum chain, which is facing a colossal change: the switch to PoS. This upgrade, also called “Ethereum 2.0,” will take place in the following steps. (Please refer to the April 2022 Korbit report for further details.) (*Please note that this report was originally published in August 2022, prior to the Merge.)

- Beacon chain, the PoS-based consensus layer, to be launched: Completed in December 2020

- Ethereum chain to switch to PoS via a “Merge” of the beacon chain and existing Ethereum chain: by 2022

- Shard chain to be introduced: by 2023

The essential part of this Ethereum upgrade is the “Merge,” which fuses the execution layer and the consensus layer into one chain. Despite repeated postponements, the Merge is making significant progress. The Sepolia testnet was merged in early July, and the last testnet, Goerli, was also merged recently. According to the Ethereum Foundation’s roadmap, it aims to complete the Merge within 2022 and launch the shard chains by 2023. Although the schedule is subject to change, participating developers expect the Merge to take place in mid-September.

Sharding and Rollups

Sharding is a method of storing and managing a database by splitting it into many small pieces. In the same manner, a blockchain can be split into many shard chains so that nodes store data for the shards in parallel. This reduces the processing load on the network as well as the burden of storing the entire chain’s data to operate a full node, resulting in greater scalability and decentralization. After the Merge, Ethereum will initially be divided into 64 shard chains, with possibly more down the road. This will give Ethereum the momentum to greatly improve its scalability at the Layer 1 level.
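As a rough illustration of the general idea of splitting data into pieces, the sketch below partitions records across 64 shards by hashing their keys. This is generic hash-based partitioning as used in databases, not Ethereum's actual shard assignment; the `blob-N` keys and the function names are hypothetical.

```python
import hashlib

NUM_SHARDS = 64  # initial shard count mentioned in the text

def shard_for(key: str, num_shards: int = NUM_SHARDS) -> int:
    """Map a key to a shard index by hashing it (deterministic and roughly uniform)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards

def partition(keys, num_shards: int = NUM_SHARDS):
    """Group keys by shard so each shard stores only its own slice of the data."""
    shards = {i: [] for i in range(num_shards)}
    for key in keys:
        shards[shard_for(key, num_shards)].append(key)
    return shards

# Hypothetical data items (illustration only).
blobs = [f"blob-{i}" for i in range(10_000)]
shards = partition(blobs)
largest = max(len(items) for items in shards.values())
print(f"largest shard holds {largest} of {len(blobs)} items")
```

Each shard ends up holding only a small fraction of the total, which is the storage relief the paragraph above describes for nodes that no longer need the entire data set.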

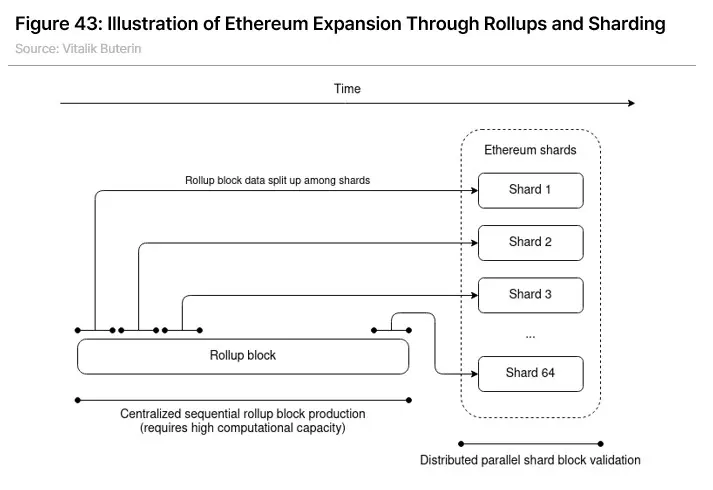

What role will rollups play once sharding is introduced? According to Ethereum’s long-term blueprint, the need for Layer 2 is likely to grow even larger as it forms a mutually complementary relationship with sharding. One reason for this forecast is that no single solution can fully resolve the scalability issue. After sharding, the beacon chain will allocate nodes to each shard, and the nodes will validate only the data on their designated shards. Because a shard with too few nodes becomes vulnerable to attack, there is a limit to the number of shards a blockchain can add. If a blockchain is built as a modular chain that combines rollups and sharding, the processing load on Ethereum is reduced because transactions are executed and compressed on the rollup chain, and the data stored on Ethereum is distributed among multiple shard chains. This enables a much greater scalability improvement than either solution alone (See Figure 43).
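Why too few nodes per shard is dangerous can be illustrated with a simple probability sketch. It rests on assumptions not stated in the report: a fixed pool of 4,096 validators, an attacker controlling one-third of them, seats filled independently (a binomial approximation), and a shard counting as compromised once two-thirds of its committee is attacker-controlled. All of these numbers are hypothetical.

```python
from math import comb

def takeover_probability(committee_size: int, attacker_share: float) -> float:
    """Probability that at least two-thirds of a committee is attacker-controlled.

    Assumes each seat goes to the attacker independently with probability
    `attacker_share` (a binomial approximation of random assignment).
    """
    needed = (2 * committee_size + 2) // 3  # ceil(2n/3) in integer arithmetic
    honest_share = 1.0 - attacker_share
    return sum(
        comb(committee_size, k) * attacker_share**k * honest_share**(committee_size - k)
        for k in range(needed, committee_size + 1)
    )

TOTAL_VALIDATORS = 4096  # hypothetical validator pool
ATTACKER_SHARE = 1 / 3   # hypothetical attacker share

risk_by_shard_count = {}
for num_shards in (32, 128, 512):
    committee_size = TOTAL_VALIDATORS // num_shards  # 128, 32, 8 nodes per shard
    risk_by_shard_count[num_shards] = takeover_probability(committee_size, ATTACKER_SHARE)

for num_shards, risk in risk_by_shard_count.items():
    print(f"{num_shards} shards: takeover probability per shard = {risk:.3e}")
```

Under these assumptions, spreading the same validators over more shards shrinks each committee and raises the chance that a single shard is captured, which is the limit on shard count described above.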

In his 2020 article titled "A Rollup-Centric Ethereum Roadmap," Buterin estimated that Ethereum’s throughput would increase from today’s 15 TPS to 3,000 TPS if all its transactions were handled on rollup chains. He went further to state that Ethereum could theoretically process up to 100,000 TPS if rollup data storage were migrated to shard chains after sharding. Some predictions are even more optimistic, assuming the number of shards increases further. Polynya, a blockchain analyst, estimated that the Ethereum network could reach 13.82 million TPS by 2030, assuming Ethereum is split into 1,024 shards, the data size per block is raised to 2.48 MB, and rollups compress the data per transaction to 16 bytes.
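Estimates of this kind follow from simple arithmetic: the data capacity available per second divided by the data each transaction consumes. The sketch below applies that formula to the shard count, block size, and transaction size quoted above. The 12-second block interval is an assumption (it is not stated in the text), as is reading 1 MB as 10^6 bytes, and the function name is hypothetical.

```python
def data_limited_tps(num_shards: int, bytes_per_block: float,
                     bytes_per_tx: float, block_time_s: float) -> float:
    """Upper bound on TPS when throughput is limited only by data capacity."""
    bytes_per_second = num_shards * bytes_per_block / block_time_s
    return bytes_per_second / bytes_per_tx

SLOT_SECONDS = 12  # assumed block interval, not given in the text

tps = data_limited_tps(
    num_shards=1024,        # shard count from the estimate above
    bytes_per_block=2.48e6, # 2.48 MB per shard block, taking 1 MB = 10**6 bytes
    bytes_per_tx=16,        # compressed rollup transaction size
    block_time_s=SLOT_SECONDS,
)
print(f"{tps:,.0f} TPS")
```

With the assumed 12-second interval, these inputs work out to roughly 13 million TPS. The result scales inversely with the block interval and shifts slightly depending on whether MB means 10^6 or 2^20 bytes, so it is best read as an order-of-magnitude check rather than a reproduction of any published figure.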

Of course, TPS figures vary widely depending on the type of transactions processed, and theoretical calculations are not the same as actual performance. However, given the direction Ethereum is pursuing, a modular blockchain that combines rollups and sharding may be what makes mass-adoption-level scalability possible for Ethereum. The Merge expected in September, as well as the shard chain launch that follows, will therefore draw even more attention to Layer 2 solutions.

② Future of Blockchain Ecosystem and Role of Layer 2

The Position of the Ethereum Ecosystem

Currently, over 90% of NFT trade volume takes place on Ethereum. Although Ethereum’s share of TVL once dipped as low as 50%, it bounced back after the Terra collapse and has held at around 60%, according to DeFiLlama. Following its long-term vision, Ethereum plans to transform into a modular structure with rollups and sharding after the Merge and to make continuous upgrades, such as introducing Verkle trees and reducing the volume of data that must be stored. With these improvements, Ethereum is expected to achieve even greater scalability and, in turn, to expand its position and market share in the blockchain ecosystem.

Layer 2 Issues and Challenges

Certain challenges must be overcome to turn these forecasts into reality. First, the Ethereum upgrade needs to go live without a hitch, and Layer 2 solutions such as rollups must continue to improve technologically alongside it.

In an effort to increase demand for its ecosystem, Arbitrum held an NFT airdrop event titled the Arbitrum Odyssey. However, when a rush of users crowded onto the network to participate in the event all at once, Arbitrum’s gas fees skyrocketed even higher than those of the Ethereum main chain. Arbitrum announced that it would pause the event and resume it after the Nitro update. This example clearly demonstrates that operating scalability solutions stably in the real world is no easy task. Further improvements are also needed to address other challenges that limit user convenience, such as the difficulty of moving assets between Layer 2s and ZK rollups’ lack of EVM compatibility.

User-Centric Development and the Role of Layer 2

Layer 1 smart contract platforms, including Ethereum, are also expected to evolve toward providing a platform tailored to the demands of each project and user. Projects must make a number of judgment calls when choosing the mainnet for their launch: the tested-and-proven Ethereum, another L1 chain with lower transaction costs and a better fit for the project’s specifics, or a chain of the project’s own. Ethereum is leveraging Layer 2 and other scalability solutions to resolve the cost and speed issues that have long been cited as its shortcomings, while other L1 chains are seeking to make up for their weaker network effects by using bridges and interoperability solutions to share assets and liquidity across multiple chains, creating a de facto network effect.

However, Ethereum is likely to remain the mainstream of the blockchain economy even if these developments lead to a future where multiple chains co-exist. One reason is that bridges remain exposed to security vulnerabilities despite the great technological strides made in inter-chain connections. According to blockchain analytics firm Elliptic, cross-chain bridge hacks accounted for more than USD 1 billion in stolen funds in the first half of this year alone. As the Ronin bridge hack (USD 620 million) and the recent Nomad bridge hack (USD 190 million) show, the cost of security failures grows as the ecosystem develops and transaction volume increases. Paradoxically, this further highlights the demand for Ethereum’s decentralization and security. The scalability gains from rollups and other solutions are meaningful only when backed by the strong security of the main chain.

Like Polygon, Ethereum is equipping itself as a modular chain with a growing set of scalability solutions, so that it can meet the varied demands of different projects and offer each one choices that fit its needs.

“The ecosystem with Ethereum at the center” and “the ecosystem where multiple chains co-exist” both require quantitative and qualitative growth of the blockchain network itself. As progress continues for the modular blockchains’ vision toward mass adoption, the limelight will stay on Layer 2 and scalability solutions that play vital roles in that vision.

→ Click here to read the full report in Korean.