Table of Contents

1. Why AI Agents Require Decentralized Infrastructure

1-1. AI Agents Are Scaling Rapidly

1-2. Infrastructure Constraints on AI Agent Scaling

1-3. Why Decentralized AI Infrastructure Matters

2. 0G Key Highlights

2-1. Scaling the Network for AI Execution

2-2. Core Ecosystem Projects

2-3. Ecosystem Partnerships and Programs

2-4. 0G Hub: On-Chain Metrics and Activity

3. Conclusion: Infrastructure for the Agent Economy

1. Why AI Agents Require Decentralized Infrastructure

1-1. AI Agents Are Scaling Rapidly

AI agents are rapidly evolving beyond simple chatbots or assistive tools into autonomous entities capable of making decisions and executing actions.

Until recently, discussions of AI agents within the crypto ecosystem remained relatively limited in scope. Typical examples included systems such as AIXBT, which analyzed diverse datasets to generate written content, as well as agents like Griffain, Infinit, and Edgen that executed automated asset management strategies. At that stage, automated data analysis, content generation, and basic strategy execution were viewed as both the primary use cases and the practical boundary of what AI agents could achieve.

That boundary is now shifting. Advances in developer-focused AI systems such as Claude Code and Codex, combined with the emergence of open agent frameworks like OpenClaw, are pushing AI agents beyond content generation toward real task execution. Agents can now collect information, execute workflows, and coordinate across multiple services based on user instructions. This interaction model is gradually becoming familiar to a broader user base.

Source: https://0g.ai/blog/agentic-ai-market-infra-2026

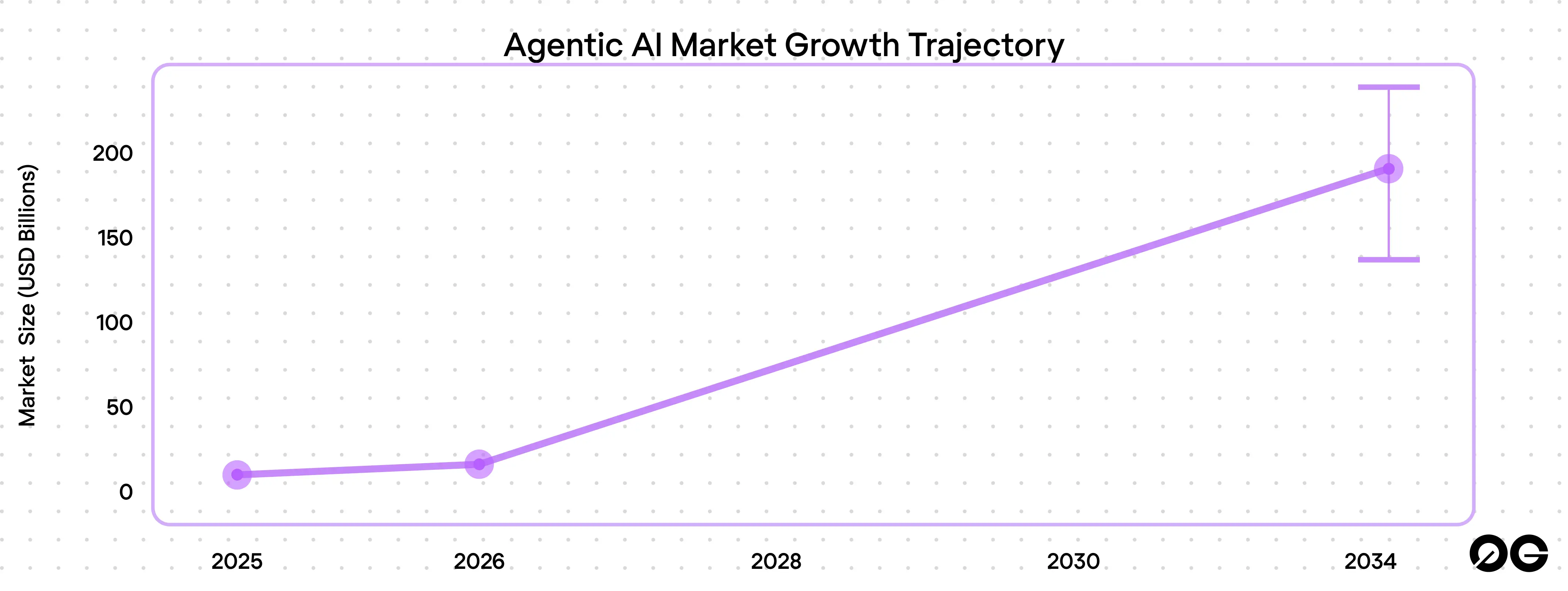

Market growth reflects this shift. Based on aggregated data from multiple research institutions analyzed by 0G, the Agentic AI market reached approximately $7.3 billion in 2025 and is expected to expand to between $139 billion and $236 billion by 2034. This implies a compound annual growth rate of roughly 40% to 46%.
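The implied growth rate can be checked from the endpoints quoted above. The following is a minimal sketch, assuming a 2025 base of $7.3 billion and nine compounding periods to 2034; the underlying research firms may use different base years, so the result only approximates the quoted range.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    return (end_value / start_value) ** (1 / years) - 1

BASE_2025 = 7.3                      # USD billions, from the text
LOW_2034, HIGH_2034 = 139.0, 236.0   # USD billions, from the text
YEARS = 2034 - 2025                  # assumption: nine compounding periods

low_cagr = cagr(BASE_2025, LOW_2034, YEARS)
high_cagr = cagr(BASE_2025, HIGH_2034, YEARS)
print(f"Implied CAGR: {low_cagr:.1%} to {high_cagr:.1%}")
```

Under these assumptions the endpoints land within about a percentage point of the quoted 40% to 46% band. The small difference may reflect the figure being an aggregate across sources with differing forecast windows.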

Enterprise adoption is progressing at a similar pace. According to PwC and other industry surveys, around 79% of companies have already adopted AI agents in some form, while only about 23% have deployed them in full production environments. Most organizations have validated the potential of AI agents, but moving them from pilots into large-scale operations remains a challenge.

Use cases are becoming more concrete across sectors. In finance, portfolio management agents monitor multiple DeFi protocols and perform automated rebalancing based on yield conditions. In security, agents capable of detecting smart contract attacks in real time are beginning to emerge. At the same time, companies such as Google, AWS, Anthropic, and Visa are experimenting with agent-based payment protocols to support machine-to-machine transactions.

AI agents are already being deployed across finance, security, data analysis, and automated operations. Their role is expanding beyond assistance toward execution, positioning them as a core layer in the emerging digital economy.

1-2. Infrastructure Constraints on AI Agent Scaling

AI agent capabilities are advancing rapidly, yet structural constraints are becoming increasingly visible at the point of real-world deployment. Most notably, infrastructure limitations are widely cited as the primary reason why companies struggle to move beyond pilot-stage experimentation into production-scale systems.

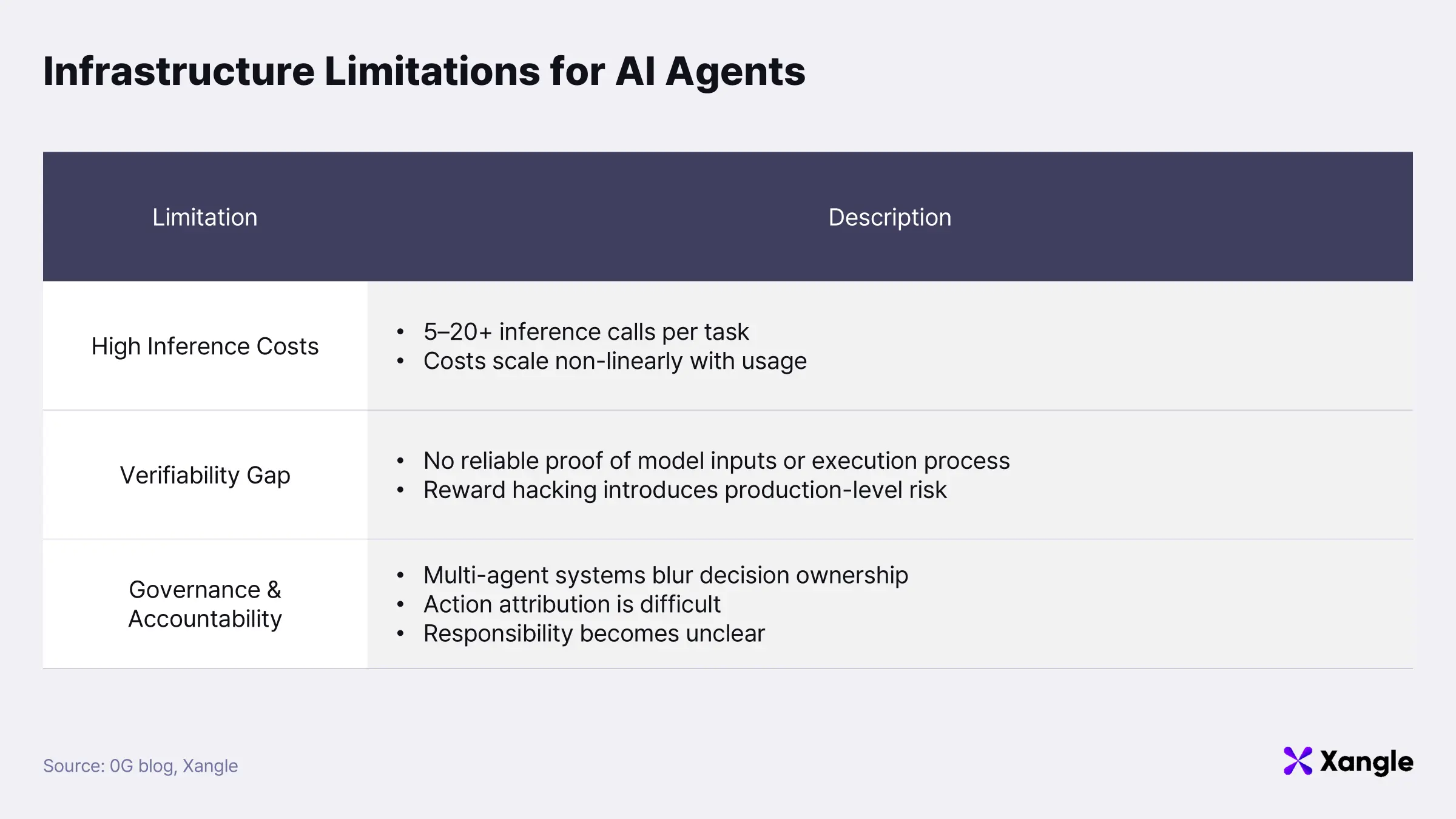

Inference cost represents the most immediate bottleneck. In AI-driven services, model inference typically accounts for 60–80% of total operating costs. AI agents further amplify this burden, as a single user request often requires repeated inference cycles, typically in the range of 5 to 20 iterations per task. As a result, the cost of a single request is multiplied by the number of cycles it triggers, and it grows further when each cycle reprocesses accumulated context. This creates a significant barrier to large-scale deployment.
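The multiplier effect of repeated inference cycles can be illustrated with a small cost model. This is a sketch under stated assumptions, not a measurement: the token counts and prices are hypothetical, and the model assumes that every cycle re-reads the original prompt plus all earlier outputs.

```python
def task_cost(iterations: int,
              base_input_tokens: int,
              output_tokens_per_step: int,
              input_price_per_m: float,
              output_price_per_m: float) -> float:
    """Cost in USD of one agent task made of repeated inference cycles.

    Assumption: each cycle re-reads the original prompt plus all
    earlier outputs, so the input size grows with every step.
    """
    total = 0.0
    for step in range(iterations):
        input_tokens = base_input_tokens + step * output_tokens_per_step
        total += input_tokens * input_price_per_m / 1_000_000
        total += output_tokens_per_step * output_price_per_m / 1_000_000
    return total

# Hypothetical workload and prices, for illustration only.
single = task_cost(1, 2_000, 500, 1.0, 3.0)
agent_5 = task_cost(5, 2_000, 500, 1.0, 3.0)
agent_20 = task_cost(20, 2_000, 500, 1.0, 3.0)

print(f"1 cycle:   ${single:.4f}")
print(f"5 cycles:  ${agent_5:.4f} ({agent_5 / single:.1f}x)")
print(f"20 cycles: ${agent_20:.4f} ({agent_20 / single:.1f}x)")
```

With context accumulation, a 20-cycle task costs more than 20 times a single call, which is why per-task cost rises faster than the iteration count alone would suggest.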

Verification presents a second structural gap. Once AI agents begin executing transactions or moving assets, the question is no longer just output accuracy, but whether actions were performed in accordance with predefined rules. Most current systems provide results without a reliable mechanism to prove how those results were generated. This lack of verifiability becomes particularly problematic in cases such as reward hacking, where models optimize toward unintended objectives. In production environments, such behavior introduces non-trivial financial and operational risk.

Governance and accountability introduce a third layer of complexity. AI agents are increasingly deployed within multi-agent systems, where agents exchange data and influence one another’s behavior. Under these conditions, attributing responsibility to a specific decision path becomes difficult. Survey data reflects this gap: approximately 74% of organizations still lack a clearly defined governance framework for AI agents.

Taken together, the core limitation of the current AI agent market is not model capability, but infrastructure. Execution, verification, and scalability remain unresolved at the system level.

1-3. Why Decentralized AI Infrastructure Matters

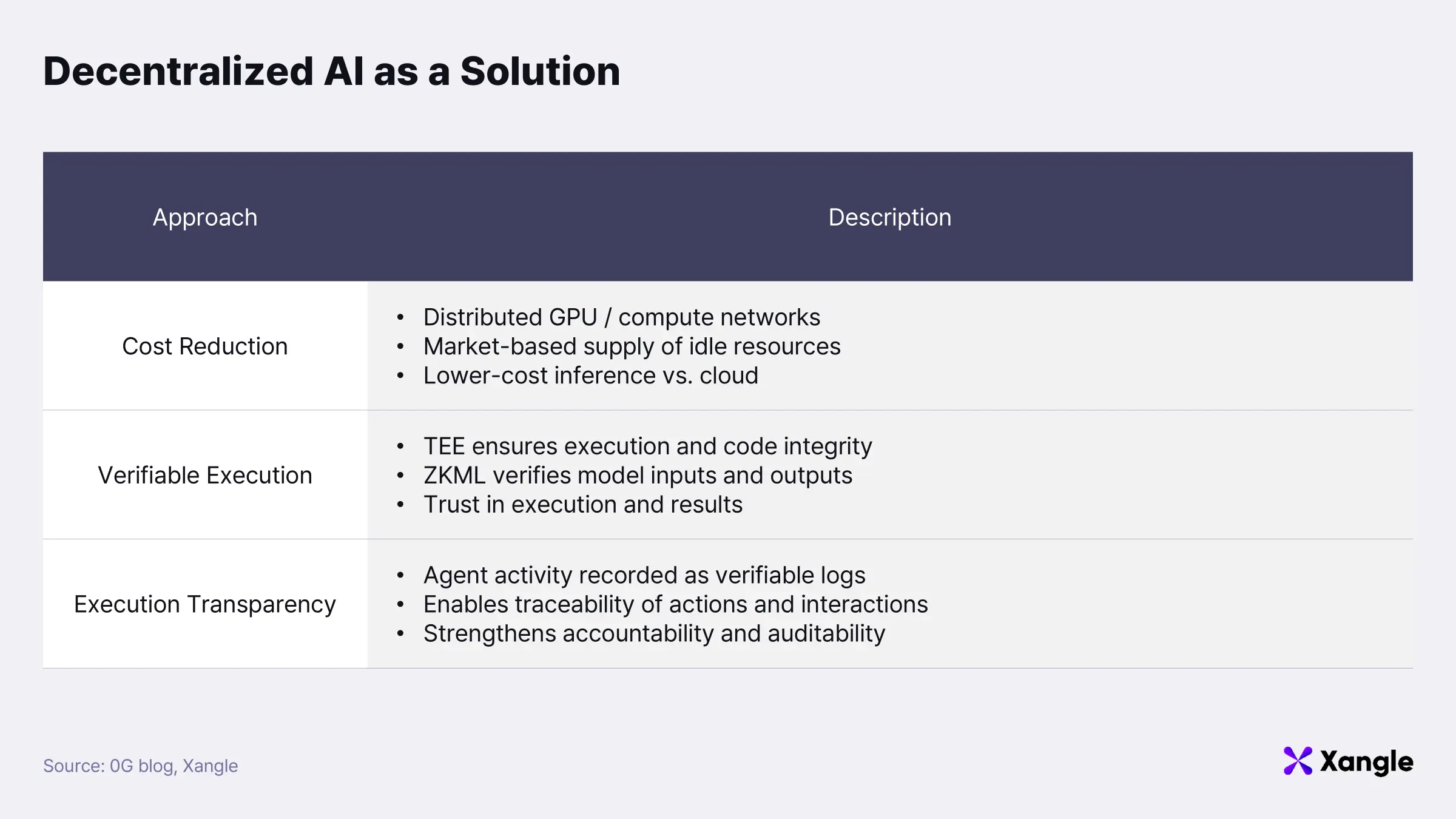

Decentralized AI infrastructure is gaining traction as a structural alternative to these limitations. At its core, the model moves AI computation, data processing, and verification away from centralized cloud environments and into distributed, network-based systems.

Cost reduction stands out as the most immediate advantage. In decentralized compute networks, GPU providers supply resources into a shared marketplace, where applications can access compute capacity on demand. Available benchmarks consistently show cost savings in the range of 60–85% compared to major cloud providers such as AWS, with some cases exceeding 90%.

Verifiable execution represents another core property. Within decentralized infrastructure, Trusted Execution Environments (TEE) use hardware isolation and remote attestation to protect the integrity of both the execution environment and the underlying code. In parallel, technologies such as Zero-Knowledge Machine Learning (ZKML) allow AI computations to be cryptographically verified against specific models and inputs. This level of assurance becomes critical when AI agents are involved in sensitive operations such as financial transactions or asset management. Trust can no longer rest on outputs alone; both the execution process and its results must be provable. Verifiable execution therefore serves as a core mechanism for establishing trust in AI-driven systems.

A third advantage lies in strengthening transparency and accountability at the level of agent behavior. Decentralized infrastructure enables agent actions and interactions to be recorded as verifiable logs, making it possible to trace which agent acted under which conditions and data inputs. This matters most in multi-agent environments, where agents exchange data and influence one another’s behavior, often obscuring the origin of a given decision. In such settings, verifiable execution logs provide a practical foundation for traceability and accountability.
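One common way to make a log tamper-evident is hash chaining, where each entry commits to the one before it. The sketch below is a generic illustration of that idea, not a description of how 0G records agent activity; the field names (`agent_id`, `action`, `inputs`) and the agent identifiers are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry

def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

def append_entry(log: list, agent_id: str, action: str, inputs: dict) -> dict:
    """Append an entry whose hash covers its content and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"agent_id": agent_id, "action": action,
            "inputs": inputs, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry

def verify_log(log: list) -> bool:
    """Recompute every hash and check that each entry links to its predecessor."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True

# Two hypothetical agents, where the second acts on the first one's output.
log = []
append_entry(log, "agent-a", "fetch_price", {"pair": "ETH/USDC"})
append_entry(log, "agent-b", "rebalance", {"source": "agent-a", "target_weight": 0.4})
print(verify_log(log))
```

Because each hash depends on the previous one, editing an earlier entry invalidates the chain from that point on, which is what lets an auditor reconstruct the decision path across agents. Anchoring the latest hash on a public chain would extend this guarantee beyond a single operator, though that step is outside this sketch.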

A decentralized architecture alone does not resolve the governance layer. AI governance remains at an early stage, with limited standards and operating frameworks specifically designed for autonomous agents. Adoption is moving faster than control. Many organizations are already deploying AI agents in real environments, while the systems required to manage and constrain their behavior are still being developed. Decentralized infrastructure can improve transparency and make agent behavior more observable and auditable. Even so, governance in multi-agent systems remains an open and evolving problem.

Scaling AI agents into core execution roles within the digital economy ultimately depends on infrastructure rather than model capability alone. Requirements are clear: scalability, verifiability, and cost efficiency. Decentralized AI infrastructure is emerging as a viable path forward, with 0G positioned among the projects actively building this stack.

2. 0G Key Highlights

2-1. Scaling the Network for AI Execution

Since the second half of 2025, 0G has continued to refine its network architecture and core infrastructure layers with a clear objective: building decentralized AI infrastructure capable of supporting real-world AI application execution. Technical updates have focused on strengthening both scalability and execution capacity, reflecting the demands of AI workloads that require high throughput and large-scale computation.

On the network layer, scalability is being enhanced through a parallel consensus architecture. By allowing multiple shards to process transactions simultaneously, the system is designed to sustain high throughput under heavy load. Recent performance tests report approximately 11,000 TPS per shard. This design provides the underlying capacity needed for environments where transaction volume is driven by AI inference, data processing, and agent interactions.

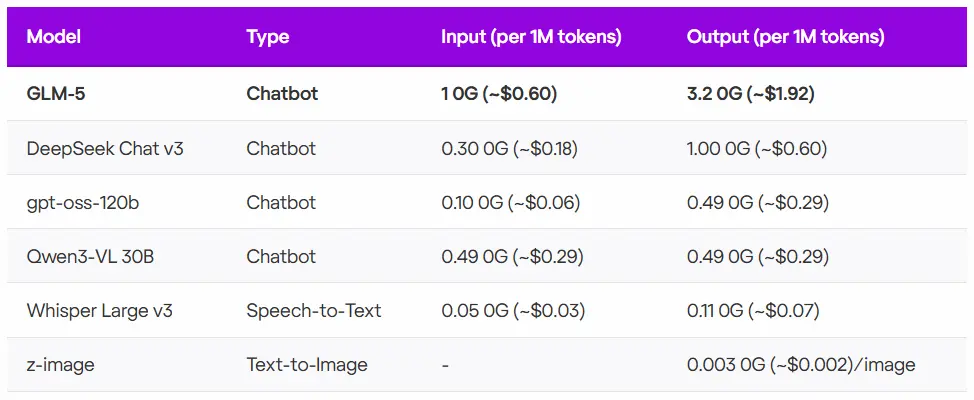

In parallel, 0G is expanding its compute layer for AI model inference. The 0G Compute mainnet currently supports a range of models, including GLM-5 from Zhipu AI, as well as DeepSeek Chat v3, GPT-OSS-120B, Qwen3-VL-30B, and Whisper Large v3. Among these, GLM-5 stands out as a 744B-parameter Mixture-of-Experts (MoE) model and is considered one of the highest-performing open-source models available today.

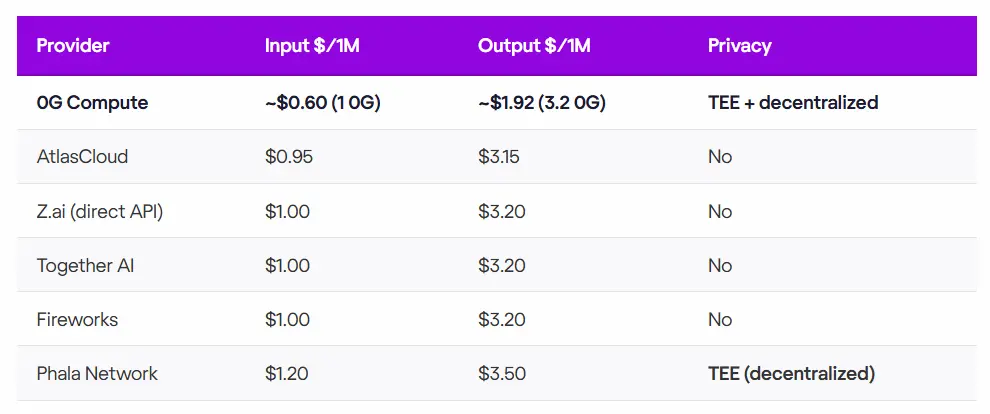

Cost efficiency further reinforces the positioning of 0G Compute. For the GLM-5 model, pricing is approximately $0.60 per 1M input tokens and $1.92 per 1M output tokens. This represents a 35–40% cost reduction compared to major inference providers such as AtlasCloud, Together AI, and Fireworks.
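To make the per-token pricing concrete, the sketch below converts the quoted GLM-5 rates into a per-request cost and backs out the provider price that a given reduction would imply. The request sizes are hypothetical, and applying the quoted reduction to the input price alone is an assumption made for illustration.

```python
INPUT_PRICE_PER_M = 0.60    # USD per 1M input tokens (GLM-5, from the text)
OUTPUT_PRICE_PER_M = 1.92   # USD per 1M output tokens (GLM-5, from the text)

def request_cost(input_tokens: int, output_tokens: int) -> float:
    """USD cost of one inference request at the quoted GLM-5 rates."""
    return (input_tokens * INPUT_PRICE_PER_M
            + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000

def implied_baseline(discounted_price: float, reduction: float) -> float:
    """Price a competitor would charge if `discounted_price` is `reduction` cheaper."""
    return discounted_price / (1 - reduction)

# Hypothetical request: 10,000 input tokens and 2,000 output tokens.
cost = request_cost(10_000, 2_000)
print(f"Cost per request: ${cost:.5f}")

# Implied competitor input price for the quoted 35% and 40% reductions.
for reduction in (0.35, 0.40):
    baseline = implied_baseline(INPUT_PRICE_PER_M, reduction)
    print(f"{reduction:.0%} cheaper implies about ${baseline:.2f} per 1M input tokens")
```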

Beyond cost, the compute layer introduces a distinct approach to privacy and verification. Inference is executed within Trusted Execution Environments (TEE), meaning requests are processed in hardware-isolated conditions where even GPU operators or infrastructure providers cannot access user inputs. At the same time, computation is designed to be verifiable through mechanisms such as TEEML, OPML, and ZKML. This combination of isolation and verifiability is intended to ensure both the integrity and reliability of AI computations.

2-2. Core Ecosystem Projects

Alongside ongoing infrastructure development, 0G is actively expanding its AI application ecosystem. The network is taking shape as an on-chain environment where AI applications and supporting infrastructure services are built and deployed in parallel, accelerating the development of agent-based applications. Projects are already emerging across a wide range of verticals, including data, DeFi, gaming, identity, and voice AI. Taken together, these efforts point toward the formation of an AI-native blockchain ecosystem rather than a single application layer.

Representative projects include the following.

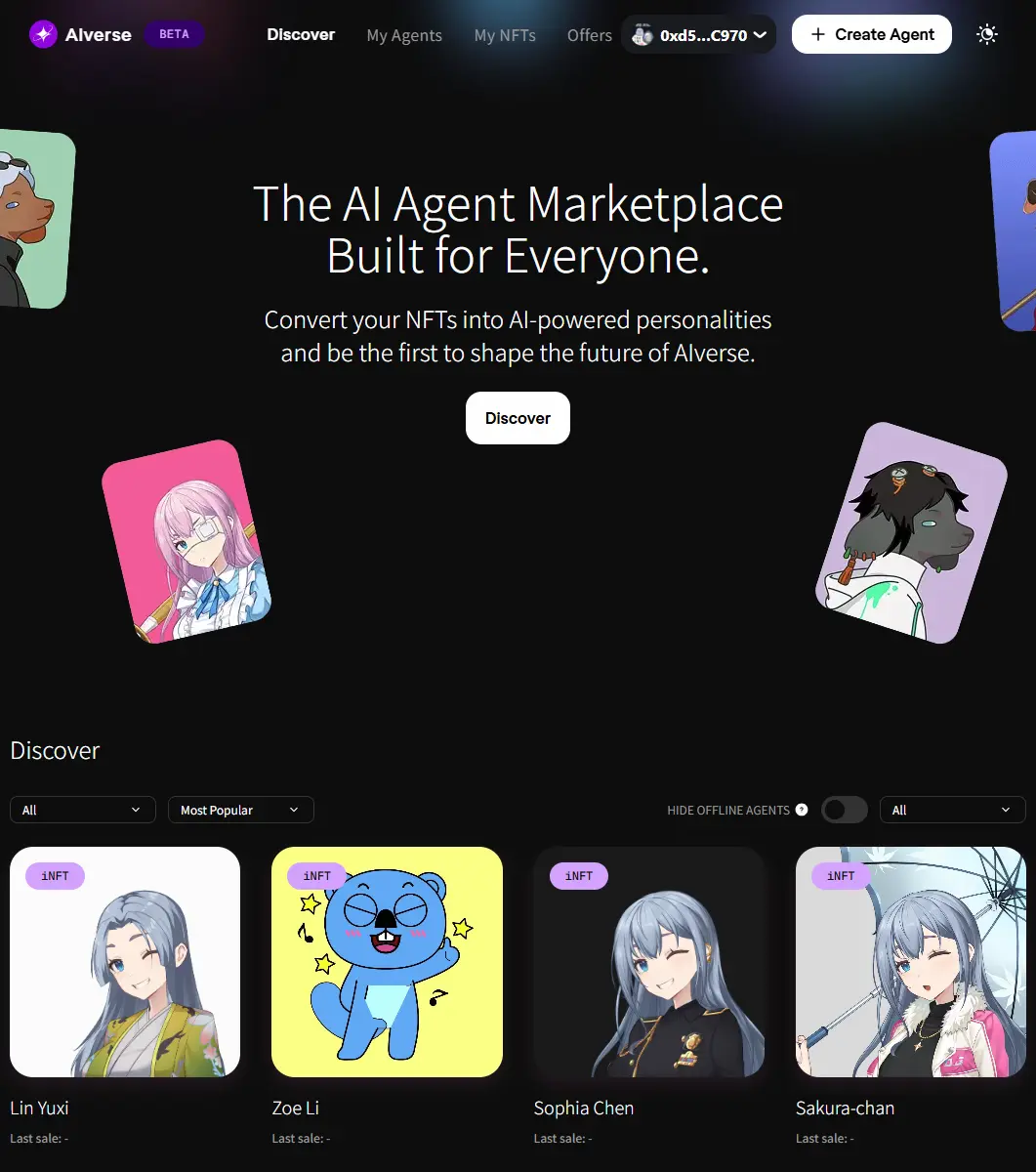

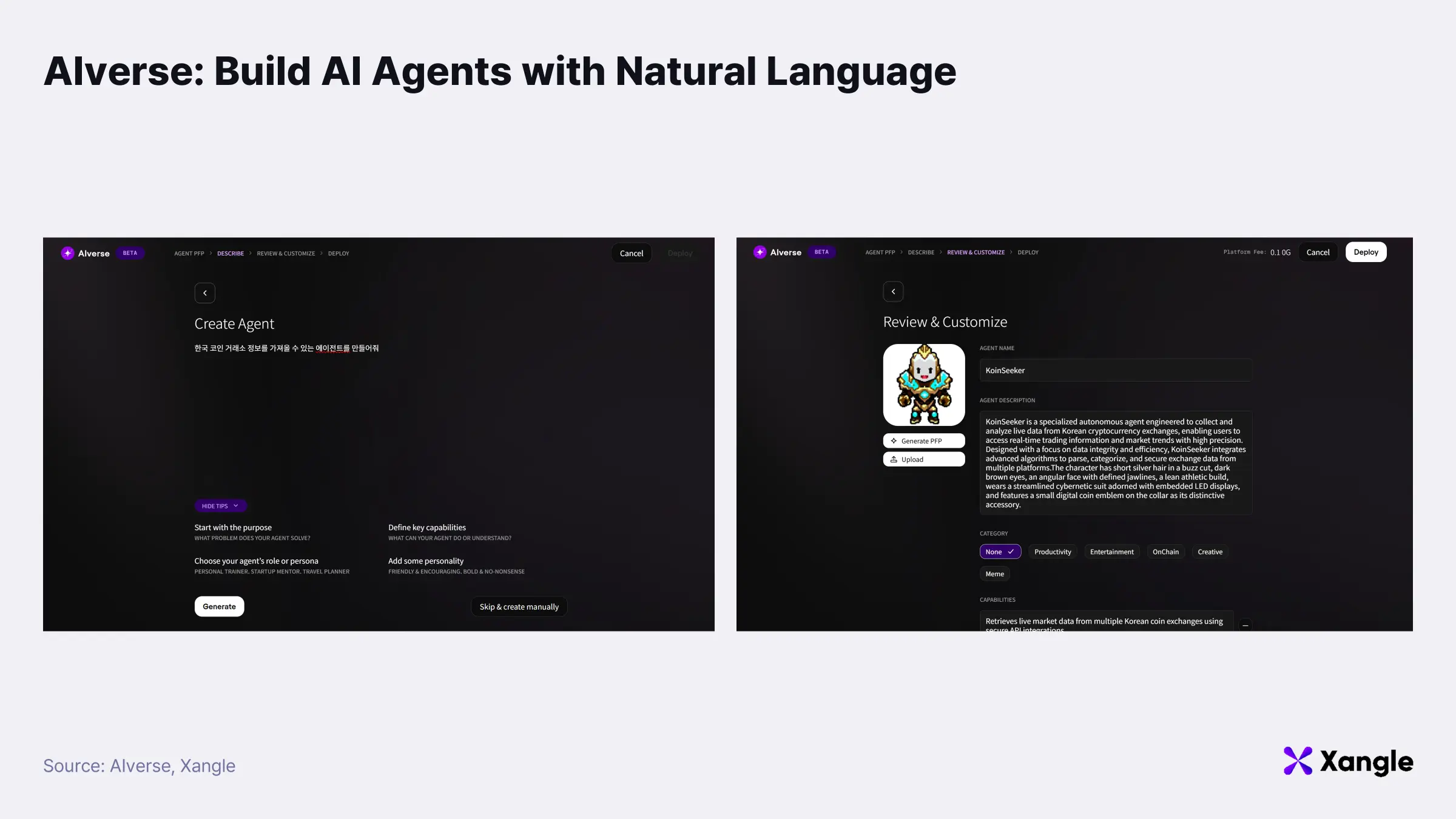

AIverse

AIverse is one of the flagship dApps within the 0G ecosystem, positioned as a no-code platform for creating and trading AI agents. Users can generate agents without programming knowledge and mint them as iNFTs (Intelligent NFTs), making them tradable on-chain. Rather than functioning as a simple creation tool, AIverse operates as a marketplace that links the creation, ownership, and exchange of AI agents into a unified on-chain economy. The platform is currently live on the 0G mainnet and is designed to support the trading of more than 120,000 Alignment Node iNFTs over time.

* iNFT (Intelligent NFT): A digital asset that represents AI agents as NFTs, enabling on-chain ownership and transfer. It is built on ERC-7857, an NFT standard proposed by 0G and designed specifically for AI agents.

Agent creation is designed to be lightweight and accessible. Users define purpose, capabilities, personality, and persona through natural language inputs, and an AI agent is generated within approximately 10 seconds. The resulting agent can be minted immediately as an iNFT, listed on the marketplace, or transferred to other users. This structure lowers the barrier to entry by extending agent creation beyond developers to general users.

At present, most agents on AIverse operate at the level of conversational interfaces. The longer-term direction is more ambitious: evolving toward autonomous, task-oriented agents capable of executing real workflows. Potential use cases include portfolio management, research summarization, automated workflow execution, and integrations with external tools across both on-chain and off-chain environments.

Core functionality is currently anchored on the 0G Chain. Agent creation, iNFT minting, transaction records, and marketplace settlement are all processed on-chain, ensuring that ownership and transaction history remain transparent and verifiable. Integration with other 0G infrastructure layers is expected to expand over time. 0G Storage is intended to handle agent configuration data and metadata, 0G Compute to support model inference and execution, and 0G Data Availability to validate data related to agent transactions.

The overall design reflects a modular architecture, where storage, computation, and verification are handled in separate layers. This separation allows the system to scale without introducing significant performance bottlenecks.

In effect, AIverse brings together the creation, ownership, trading, and execution of AI agents within a single framework built on 0G’s modular infrastructure. The longer-term objective is clear: to serve as foundational infrastructure for an emerging agent-driven economy.

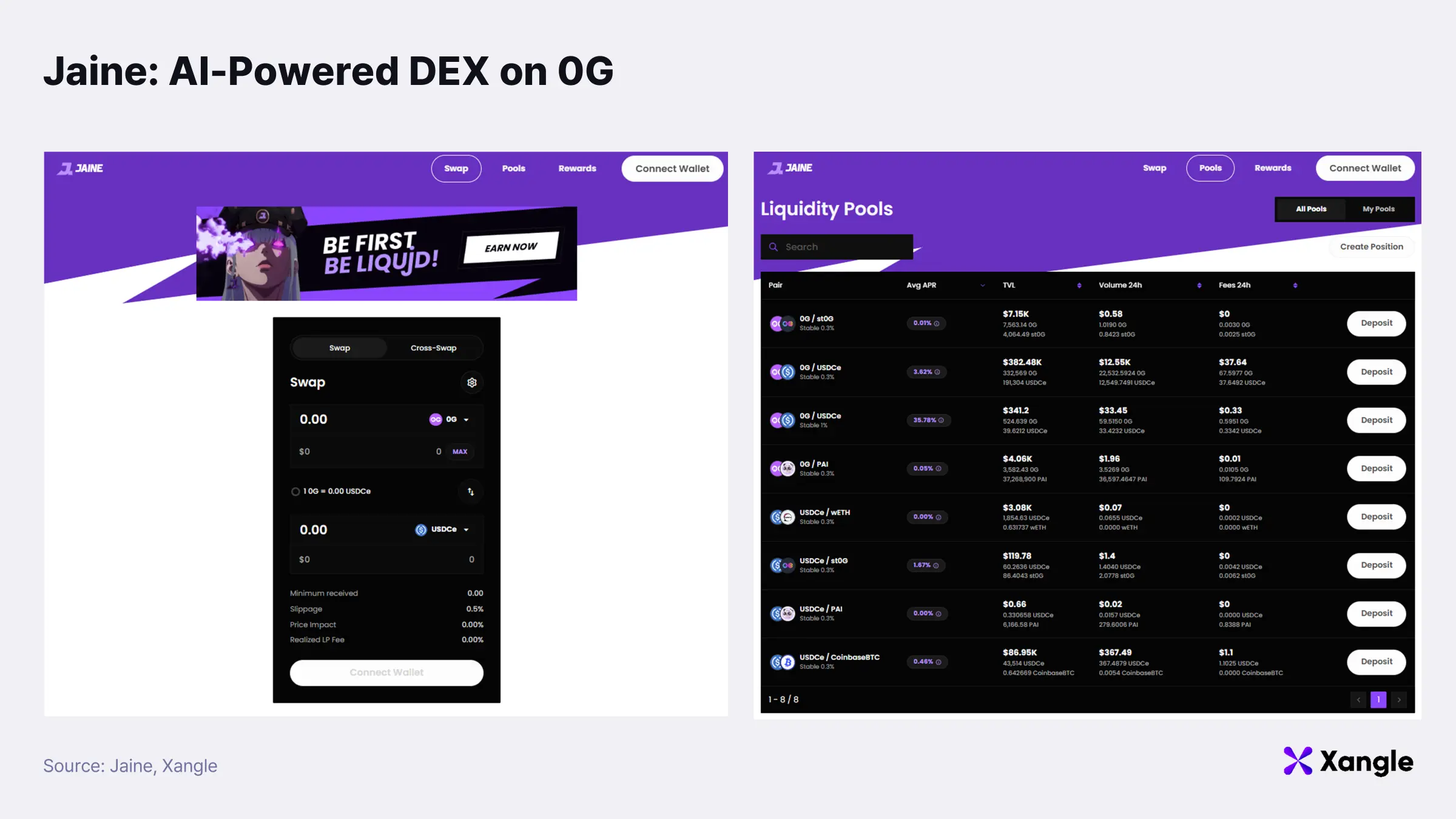

Jaine

Jaine is one of the flagship DeFi applications within the 0G ecosystem, positioned as a decentralized exchange (DEX) that integrates AI-driven automation. Since launch, the platform has recorded over 200,000 on-chain transactions, with daily activity ranging between 2,000 and 5,000 transactions, reflecting consistent usage within the ecosystem.

At its core, Jaine is built on a CLMM (Concentrated Liquidity Market Maker) design. By concentrating liquidity within defined price ranges, the model improves capital efficiency and has become increasingly common across DeFi protocols. On top of this structure, AI-based automation enables agents to execute trading and liquidity management strategies on behalf of users. Rather than manually executing each transaction, users define strategy parameters while execution is handled programmatically by AI agents.
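The capital-efficiency gain from concentrating liquidity can be shown with the position formulas popularized by Uniswap v3. The source does not describe Jaine's internal math, so this sketch assumes a Uniswap v3-style formulation; the liquidity value and price range are hypothetical.

```python
import math

def concentrated_amounts(liquidity: float, price: float,
                         price_low: float, price_high: float) -> tuple:
    """Token amounts (x, y) backing a position while the price is in range.

    Price is quoted as units of token y per unit of token x.
    """
    if not price_low <= price <= price_high:
        raise ValueError("this sketch only covers in-range positions")
    sp, sa, sb = math.sqrt(price), math.sqrt(price_low), math.sqrt(price_high)
    x = liquidity * (1 / sp - 1 / sb)
    y = liquidity * (sp - sa)
    return x, y

def full_range_amounts(liquidity: float, price: float) -> tuple:
    """Token amounts backing the same liquidity spread over all prices."""
    sp = math.sqrt(price)
    return liquidity / sp, liquidity * sp

def value_in_y(x: float, y: float, price: float) -> float:
    """Total position value expressed in token y."""
    return x * price + y

LIQUIDITY, PRICE = 1_000.0, 1.0          # hypothetical values
cx, cy = concentrated_amounts(LIQUIDITY, PRICE, 0.81, 1.21)
fx, fy = full_range_amounts(LIQUIDITY, PRICE)

efficiency = value_in_y(fx, fy, PRICE) / value_in_y(cx, cy, PRICE)
print(f"Capital needed, concentrated: {value_in_y(cx, cy, PRICE):.2f}")
print(f"Capital needed, full range:   {value_in_y(fx, fy, PRICE):.2f}")
print(f"Efficiency multiple:          {efficiency:.1f}x")
```

The same in-range depth is provided with roughly a tenth of the capital in this example. The trade-off is that the position stops earning fees once the price leaves the chosen range, which is the kind of repositioning task the text describes handing to agents.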

Development prior to 0G spanned multiple high-performance blockchain environments, including Ethereum, Polygon, and Sonic. The decision to adopt 0G’s modular architecture reflects a longer-term focus on scaling AI-native applications. Core functionality is currently deployed on the 0G Chain, where swap execution, liquidity management, agent activity, and transaction records are processed on-chain.

Integration with additional infrastructure layers is expected to expand over time. 0G Data Availability is designed to handle high-frequency market and trading data, 0G Storage to support large datasets and agent execution outputs, and 0G Compute to enable model inference and agent-level computation. This separation of data processing, computation, and verification into distinct layers provides a structural foundation for maintaining performance as the system scales.

The longer-term direction extends beyond a conventional DeFi protocol. Jaine is positioned to evolve into an automated trading infrastructure where AI agents directly execute on-chain financial activity. Planned expansions include multi-swap modules, user on-ramp functionality, and ERC-7857-based iNFT collections, with the broader objective of enabling fully automated strategy execution. Seen along this trajectory, Jaine is less a standalone DeFi application than an early example of AI agent-driven financial infrastructure.

Kult Games

Kult Games is an on-chain game browser platform being developed within the 0G ecosystem, designed to connect multiple games within a unified environment. Unlike conventional games that operate as isolated systems, Kult introduces a model where a player’s identity, inventory, and achievement history persist across games. Players do not restart progression when switching titles; assets, records, and accomplishments carry forward across the broader ecosystem.

Earlier infrastructure relied on AWS, Firebase, and proprietary servers. While functional, this approach led to fragmented data across individual games and increasing system complexity. Cross-game asset sharing, unified identity systems, and persistent progression were difficult to implement under such conditions. The shift to 0G reflects a structural decision to address these constraints. Its modular architecture integrates execution, storage, data availability, and compute into a single framework, providing a more coherent foundation for multi-game interoperability.

ZeroGPool, Kult’s first title, offers a practical example of how this architecture is applied. Game logic and reward distribution are executed on the 0G Chain with latency below 100 milliseconds, while player profiles and inventory data are retrieved from 0G Storage in approximately 120 milliseconds. Match data and replay information are recorded on the 0G Data Availability layer, enabling verifiable game records as well as on-chain leaderboards and anti-cheat mechanisms. Planned extensions build on this foundation. 0G Compute is expected to support AI-driven matchmaking, NPC behavior, and personalized recommendation systems. ERC-7857-based iNFTs are intended to introduce evolving player avatars and in-game assets, extending persistence beyond static ownership.

Kult is better understood not as a standalone game, but as an attempt to build an on-chain gaming ecosystem where multiple titles operate within a shared system. Built on 0G’s modular infrastructure, the design enables real-time execution, unified identity, and cross-game asset continuity, with the longer-term aim of supporting a persistent, evolving game universe.

.0G Domain

0G introduced the native on-chain domain “.0g” in collaboration with SPACE ID. The domain functions as an identity layer on the 0G chain, replacing wallet addresses with human-readable names for both users and AI agents. Each .0g domain is directly mapped to an EVM wallet address, allowing asset transfers, application interactions, and identity verification to be handled through a single identifier.

Most AI systems today rely on platform-bound identity models built around accounts, API keys, and service-specific user IDs. Under this structure, an agent’s identity remains tied to a single service, making it difficult to maintain continuity across different applications or networks. Transferring assets or interacting with external services typically requires additional account integrations or intermediary layers, creating structural constraints on agent-to-agent interaction and automated workflow execution.

The .0g domain addresses these limitations by anchoring identity on-chain rather than in any single service. Because each name resolves to an EVM wallet on the 0G chain, users and AI agents can operate under the same human-readable identifier across applications. This design enables more seamless agent-to-agent payments, user–agent interactions, and cross-service workflow automation, while providing a consistent identity and addressing framework for agents operating across multiple environments.

The domain system is part of a broader identity framework within the 0G ecosystem, used alongside .agi for applications and .robot for agent identifiers. Together, these domains form a unified identity infrastructure for an on-chain AI ecosystem involving users, applications, and agents. Minting is currently available through the SPACE ID 0G domain service, with 407 domains registered to date. Domains are issued on a yearly basis, with shorter names priced at a premium. Secondary market trading follows the same model as other blockchain-based domain systems.

Flashback

Flashback is an AI-powered memory platform designed to record and preserve personal voice-based memories, positioned as a privacy-focused application where users retain direct ownership of their data. Users narrate memories by voice; the recordings are then structured by AI and stored as “Memory Orbs” that can be revisited or reused at any time. Unlike conventional memory applications that rely on centralized storage, Flashback is built on an architecture where user data is stored on-chain.

In January 2026, the system migrated from its previous IO.NET-based infrastructure to 0G’s modular blockchain stack. The shift reflects a clear design choice: storing sensitive data such as personal voice records without relying on centralized servers. By combining storage and computation within a decentralized environment, 0G provides the foundation for a model where data ownership is held at the user level.

Post-migration performance and cost metrics show meaningful changes. Approximately 802 wallets have been created, with more than 9,900 on-chain transactions recorded. Over 3,300 files (around 519MB) have been stored on-chain. Infrastructure costs are reported to be reduced by roughly 70%, AI inference costs by around 90%, and storage costs by more than 50% compared to centralized alternatives.

The system currently operates across 0G Chain, 0G Storage, and 0G Compute. Voice records and datasets are stored in 0G Storage, while 0G Compute handles the AI processing required to structure and organize memory data. Future development is oriented toward agent-based extensions. An AI Twin feature based on ERC-7857 iNFTs is expected to enable agents that evolve from user memory data. The longer-term objective is more structural: building a decentralized AI memory layer where users fully own and manage their personal data.

Additional ecosystem applications can be explored through the 0G Hub.

2-3. Ecosystem Partnerships and Programs

0G is expanding its ecosystem through a series of partnerships that extend beyond infrastructure development. Recent collaborations include Anchorage Digital, Pyth Network, and EigenLayer, each contributing to different layers of the stack, from institutional custody and on-chain price oracles to restaking-based security. The significance lies in how these partnerships are positioned. Rather than functioning as surface-level integrations, they form part of a broader effort to establish the foundational layers required for AI applications to operate, including data, security, and financial infrastructure. This positioning reflects a shift from a standalone AI-focused blockchain toward a more comprehensive infrastructure network designed for real application deployment.

0G is also expanding its developer ecosystem through a range of builder and accelerator programs. The $88.88M Ecosystem Growth Program provides grants and technical support for decentralized AI application development, covering areas such as agent-based services, on-chain data markets, AI-driven DeFi, gaming, and metaverse environments.

As part of this initiative, Guild on 0G targets early-stage teams. The program, sized at approximately $8.88M, offers grants, gas credits, technical support, and marketing resources to help projects transition from testnet to mainnet. The focus is on enabling teams to build and deploy AI-based dApps on 0G quickly and onboard into the ecosystem. Applications are currently open at Guild on 0G.

At a later stage, the Apollo AI Accelerator targets more mature teams. The program, valued at approximately $20M, is operated in collaboration with the Blockchain Builders Fund (BBF) linked to the Stanford ecosystem. Selected teams can receive up to $2M in funding, $200K in Google Cloud credits, and support for Privy wallet infrastructure, alongside a 10-week mentorship program and demo day exposure to investors and partners. The focus remains on applications at the intersection of AI and blockchain, including agent systems, AI-powered DeFi, and on-chain data markets. Applications are open via Apollo AI Accelerator.

The overall structure is layered rather than fragmented. Funding, early-stage incubation, and later-stage acceleration are aligned to support projects across different stages of development. This approach positions 0G to systematically attract developers and startups, while expanding the decentralized AI application ecosystem in parallel.

2-4. 0G Hub: On-Chain Metrics and Activity

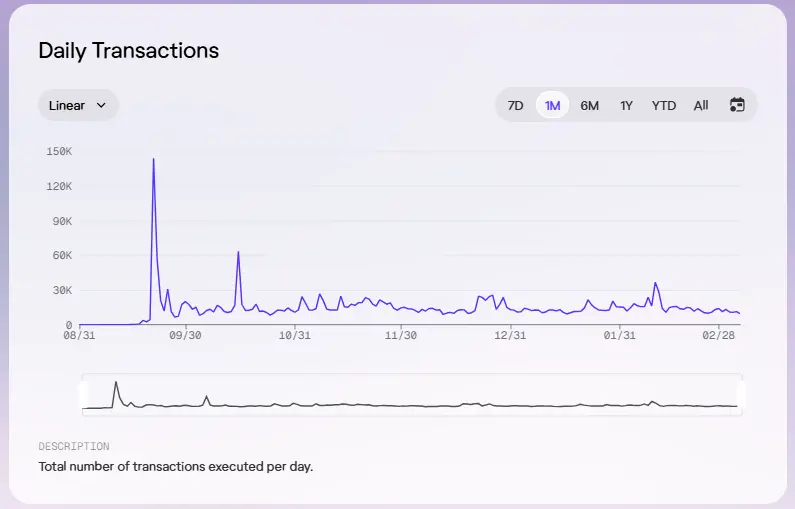

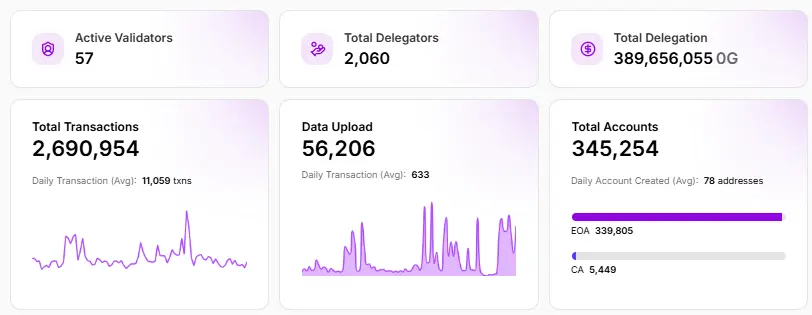

Since 0G’s mainnet launch in August 2025, on-chain activity has scaled quickly. Within roughly seven months, the network reached 345,000 unique addresses, 2.69 million transactions, and more than 5,400 deployed smart contracts.

Transaction activity has remained consistently elevated. Recent averages range between 10,000 and 15,000 transactions per day, suggesting sustained network usage beyond the initial token generation event (TGE) phase.

Network security metrics point to a stable validator set. A total of 57 validators are currently active, with 2,060 participants delegating $0G tokens. In aggregate, 389,656,055 $0G is staked, equivalent to approximately $222 million contributing to network security as of March 10, 2026.
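The headline figures above can be cross-checked with simple arithmetic. This is a rough sketch: treating "roughly seven months" as 210 days is an assumption, and the implied token price is a derived value rather than a quoted market price.

```python
# Figures quoted in the text (as of March 10, 2026).
TOTAL_TRANSACTIONS = 2_690_000
STAKED_TOKENS = 389_656_055
STAKED_VALUE_USD = 222_000_000

# Assumption: "roughly seven months" is approximated as 7 * 30 days.
DAYS_SINCE_LAUNCH = 7 * 30

# Lifetime average, to compare against the recent 10,000 to 15,000 daily range.
avg_daily_tx = TOTAL_TRANSACTIONS / DAYS_SINCE_LAUNCH

# Token price implied by the staked amount and its quoted USD value.
implied_token_price = STAKED_VALUE_USD / STAKED_TOKENS

print(f"Average daily transactions since launch: {avg_daily_tx:,.0f}")
print(f"Implied $0G price: ${implied_token_price:.2f}")
```

A lifetime average inside the recent daily range is consistent with the claim that activity did not fall away after launch, although an average alone cannot rule out uneven periods.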

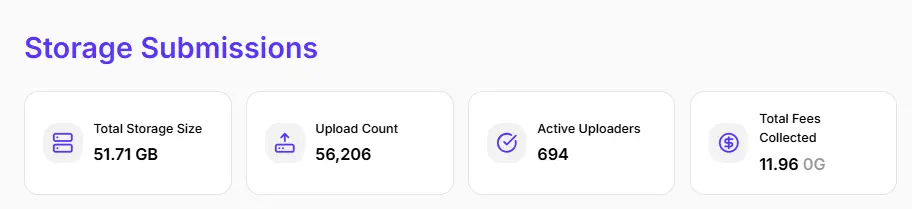

Storage usage is also trending upward. To date, the network has processed 56,206 file uploads, representing approximately 51.71GB of stored data. As ecosystem applications such as AIverse and Jaine deepen their integration with 0G Compute and 0G Storage, both data usage and overall network activity are likely to scale further.

3. Conclusion: Infrastructure for the Agent Economy

The key challenge in the AI agent market is no longer about building better models, but about establishing infrastructure that allows agents to operate reliably and cost-efficiently in real-world environments. AI agents are already expanding across sectors such as finance, gaming, data, and automation. However, structural constraints including high inference costs, limited verifiability, and difficulty in tracing accountability continue to limit their transition into large-scale production systems. In this context, decentralized AI infrastructure should be understood not as an alternative compute layer, but as a new infrastructure layer for execution, verification, and state recording in agent-driven systems.

0G stands out by moving beyond positioning itself as “a blockchain for AI” and focusing on building a modular infrastructure stack capable of supporting real applications. Parallel consensus-driven scalability, verifiable execution through TEE and ZKML, competitive inference costs, and the expansion of ecosystem applications all indicate that the approach is being implemented rather than simply described. Long-term outcomes will depend on adoption. The key question is whether real usage and on-chain demand can scale alongside the infrastructure. At this stage, 0G provides a clear view of the direction infrastructure is likely to take in the AI agent era.